- Blog

- 06.03.2019

- Data Fundamentals

Improving Python Efficiency in Matillion: Offload Large Python Scripts

Matillion has the functionality to run Python Scripts as part of a Matillion job run. This is commonly used for setting variable values, running quick queries on the target database using database cursors, and connecting to other AWS services. However, there are some circumstances where the amount of processing required by the Python script may affect the resources on the EC2 instance which is used to host the Matillion application. Examples of where this is the case include processing large files such as running XML to JSON conversion or zipping/unzipping large files or running API Calls which require or return a lot of data. Running the Python script outside of Matillion can minimize the impact on the rest of the Matillion server. This results in Matillion jobs not running when required or the UI becoming unresponsive. Another reason for running the scripts remotely may be because of corporate requirements around security or execution isolation.

In this blog, we will look at some options for running Python scripts outside of the Matillion instance using the Bash Script component. In this example, we are using a simple Python script which is used to convert an XML file to a JSON file so it can be loaded into the target database which can require a lot of memory and processing power to convert large files.

- SSH

- AWS Lambda

- AWS Glue

SSH

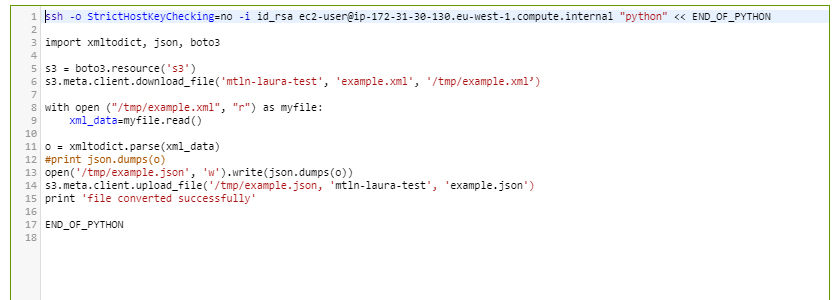

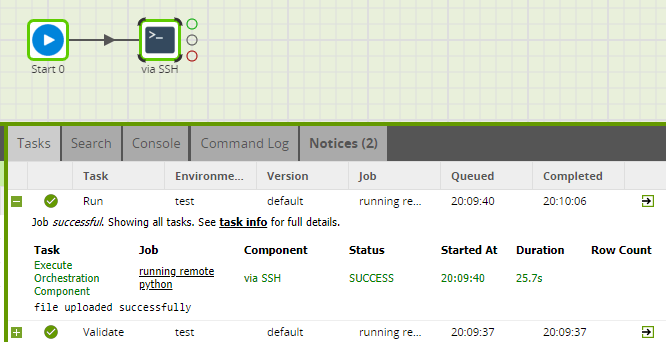

It’s possible to run the script remotely on another EC2 instance using an SSH command to connect to the instance. If required, the AWS CLI can even be used to start and stop the instance on demand. The SSH command is used to connect to the instance, using a key stored on the Matillion Server. The script is run using the “Python” command. In this example, the Python script is passed through to the remote server using a “heredoc”.

For more details on the Python script see here.

The result of the Python script will be printed in the Bash window:

Considerations

The advantages of using SSH to remotely run the script are:

- Control over the memory allocated on the remote server to the Python Script.

- All libraries can be installed and maintained on the remote server.

- The version of Python can be changed as required.

Disadvantages are:

- Cost and maintenance implications of a new server.

- Cannot reuse Matillion connection into Amazon Redshift to run commands (Database Cursor) although a new connection could be used.

- Variables are not available in the global namespace, nor can they be updated i.e. it’s not possible to reuse the Matillion “context” object provided. However, referencing variables using the syntax ${this_syntax} will resolve to the variable value before the Bash script executes.

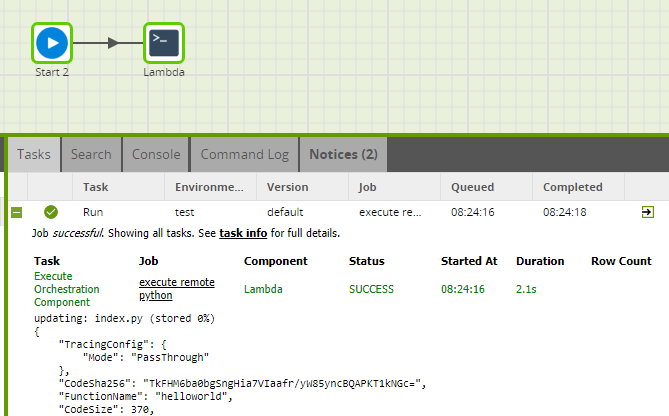

AWS Lambda

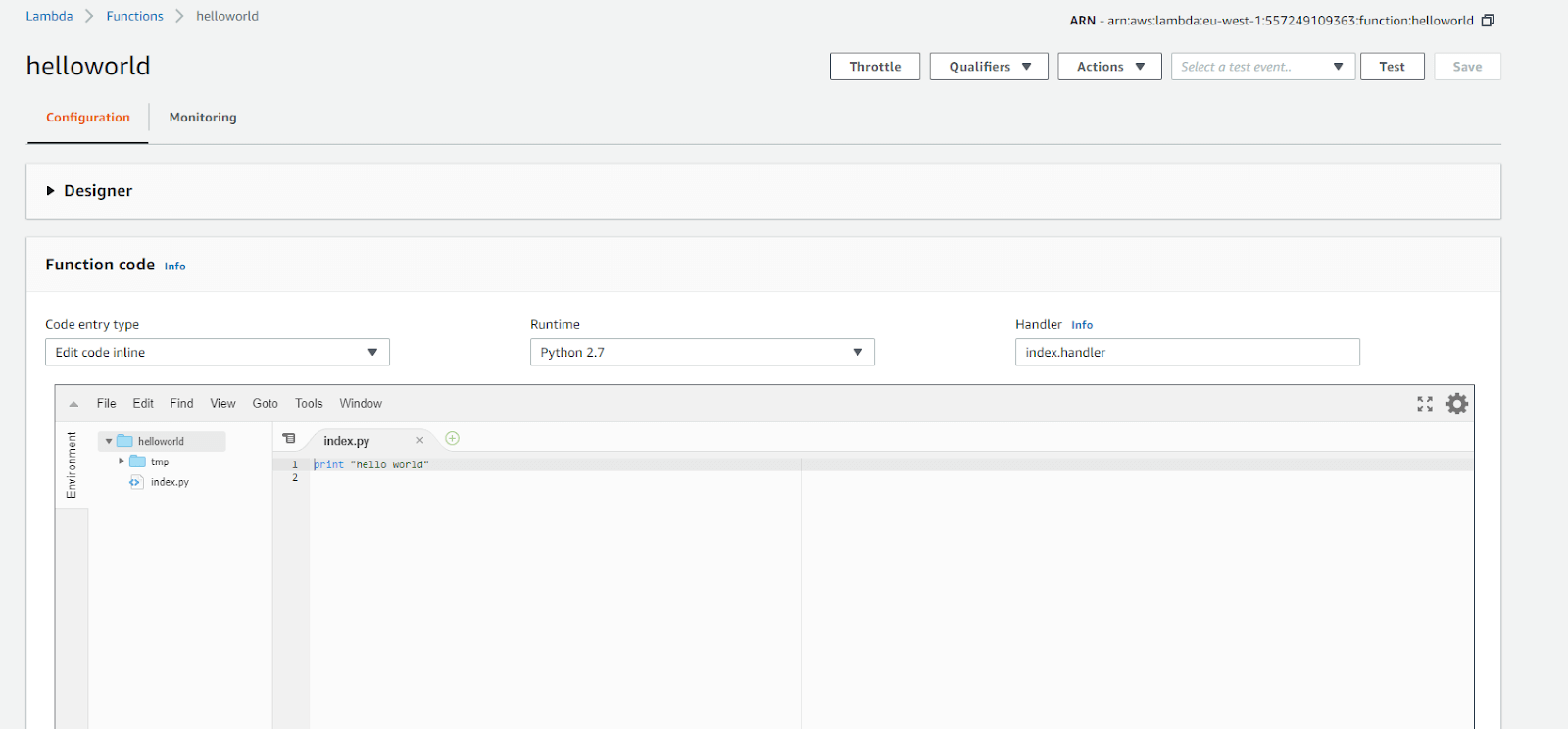

AWS Lambda allows you to run code without worrying about servers, you simply pay for the compute time you use. Python 2.7, Python 3.6 and Python 3.7 are amongst the languages supported. To run a Python script in AWS Lambda, a Lambda function must first be created and then run when required. Functions can be created and run through the AWS web console. A simple example function is shown below:

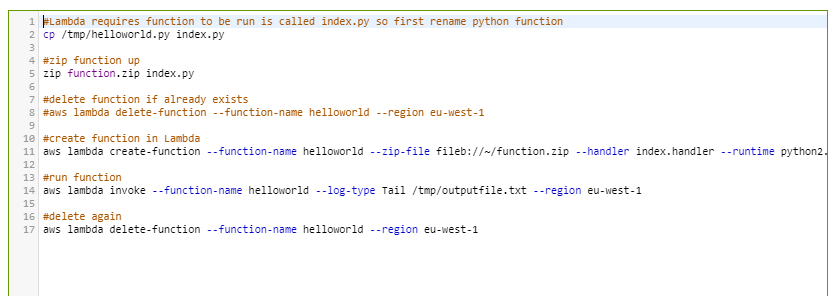

The AWS CLI supports creating a Python function in Lambda and then running it. These CLI commands can be run from a Bash Script component within Matillion. The example script below assumes the ‘helloworld.py’ Python script exists on the Matillion server in the /tmp directory and walks through the steps required to use this to create a Lambda function from it and run it accordingly. Please note the below will redefine the function over and over. If the code does not change, it can simply be run using the run statement:

Note: the AWS Credentials for the Environment used to run this job requires the IAM policy AWSLambdaBasicExecutionRole.

Considerations

Advantages

- No need to worry about provisioning extra servers as required to with SSH.

- Can be cost efficient since you only pay for the compute resources used - there’s no chance of accidentally leaving a server on.

Disadvantages

- Script can’t run for more than 15 mins, meaning this may not be a suitable option for complex scripts or large amounts of data.

- Requires access IAM policy AWSLambdaBasicExecutionRole which may be a security concern

- Dependencies aren’t available in Lambda and must be packaged locally and deployed up to Lambda

- Cannot reuse Matillion connection to run commands on Amazon Redshift (Database Cursor)

- The function output is not displayed in the Task History, instead, it has to be read from a separate file, adding an additional step to your auditing processing.

- Requires AWS Lambda which has a cost implication.

AWS Glue

In general, we recommend taking an ELT approach with Matillion and pushing the bulk of the processing down to the target data warehouse. However, there are certain edge cases where the required work cannot be achieved in the target warehouse, for example processing a lot of unstructured data. Matillion customers have traditionally written Python scripts to handle these edge cases and here we discuss using AWS Glue to run those Python scripts.

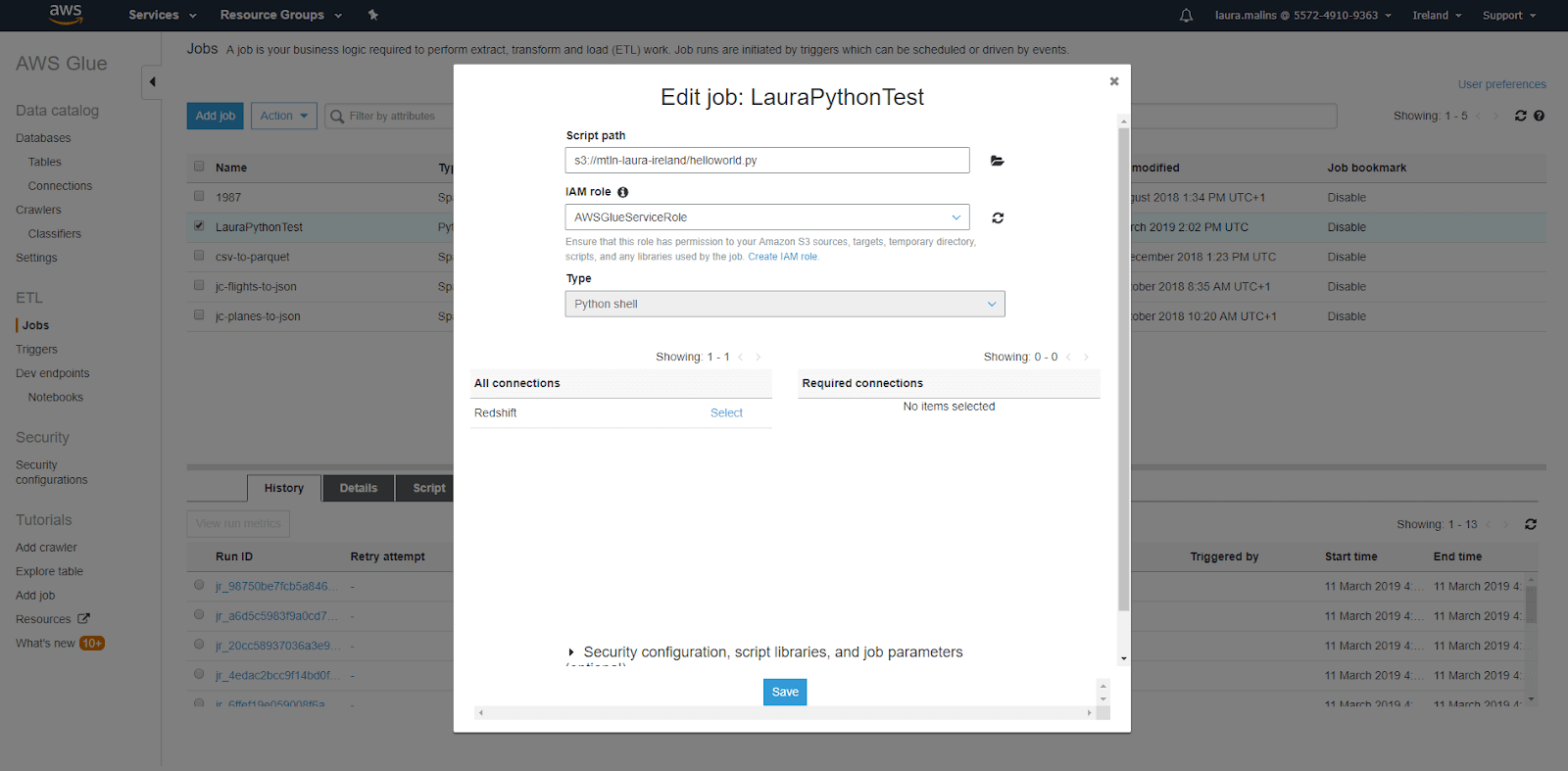

AWS Glue can be used to create and run Python Shell jobs. These are Python Scripts which are run as a shell script, rather than the original Glue offering of only running PySpark. Any script can be run, providing it is compatible with 2.7. As with the Lambda function, first of all, an AWS Glue ETL job must be created, as a Python Shell job, and then executed. The AWS Glue job is created by linking to a Python script in S3, an IAM role is granted to run the Python script under and any connections available connections, such as to Amazon Redshift are selected:

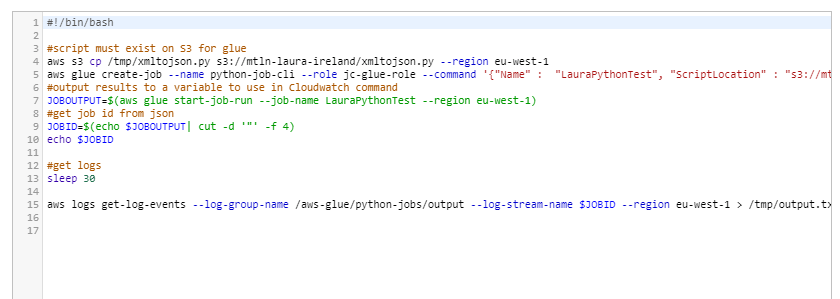

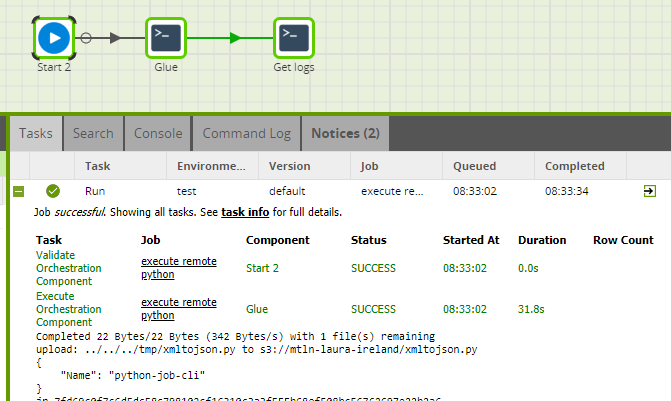

Again, the Glue Job can be created either via the console or the AWS CLI. You can turn this into a Matillion job, which is especially helpful if the Python code is repeatable. Matillion can then tell Glue to run the Python. Below are the steps to create and run the job via the AWS CLI from a Bash script component within Matilion.

In AWS Glue, the output of a job run is written to Cloudwatch. If these are required in Matillion, the output of the command has to be written to a file on the Matillion server.

Note to run this script the Matillion environment requires the below policies:

- AmazonGlueServiceRole

- IAMReadOnlyAccess

Considerations

Advantages

- Can specify a script timeout, which solves the limitation of the Lamba method

- In the Glue script, parameters can be defined and referenced in the Python. When the script is run, values for the parameters can be provided. This can be done by using Matillion variables in the Bash script. This means Glue scripts can be re-usable scripts and avoid having to push a new script with each execution.

Disadvantages

- Must be Python 2.7

- Limited libraries - but can upload your own

- Python script needs to be on S3

- Cannot reuse Matillion connection to run commands on Amazon Redshift (Database Cursor)

- Requires AWS Glue which has a cost implication

Conclusion

In general, we recommend avoiding writing Python scripts in Matillion where possible and try to use components to push the processing down to the target data warehouse using an ELT approach. However, there may be circumstances where this isn’t possible due to the data used or the nature of the Transformation.

In those circumstances, before developing Python scripts within Matillion we recommend that you carefully consider what the script will do and consider running Python scripts which require considerable resources remotely. For a large XML file, the Python script above can take up to 10 mins to run. Having this run on the Matillion server would affect the CPU and memory available to run the Matillion jobs and could also have an adverse effect for Matillion users. Using the remote options discussed ensures the script can be initiated from Matillion but also run in isolation. The result of the script can easily be fed back to Matillion. There are, however, some cases where it is best to run the script directly within Matillion, for example, any Jython code.

Featured Resources

What Are Feature Flags?

Feature flags are a software development tool that has the capability to control the visibility of any particular feature. ...

BlogHow Your Data Teams Can Do More With Marketing Analytics

Improve your marketing analytics with Matillion Data Productivity Cloud that enables businesses to centralize and integrate ...

BlogThe Importance of Data Classification in Cloud Security

Data classification enables the targeted protection and management of sensitive information. Personally Identifiable ...

Share: