Productivity Benefits

Alleviate the pressure on data engineering resources

with a multi-tooled platform that’s accessible to a wider range of skills.

Remove bottlenecks for new projects

by making complex data transformation easier, faster or more self-service.

Improve processing speeds

with efficient parallel processing to handle changes across multiple jobs at once.

Remove duplicated efforts

through GitHub integration and automatic versioning.

Transform data with Matillion

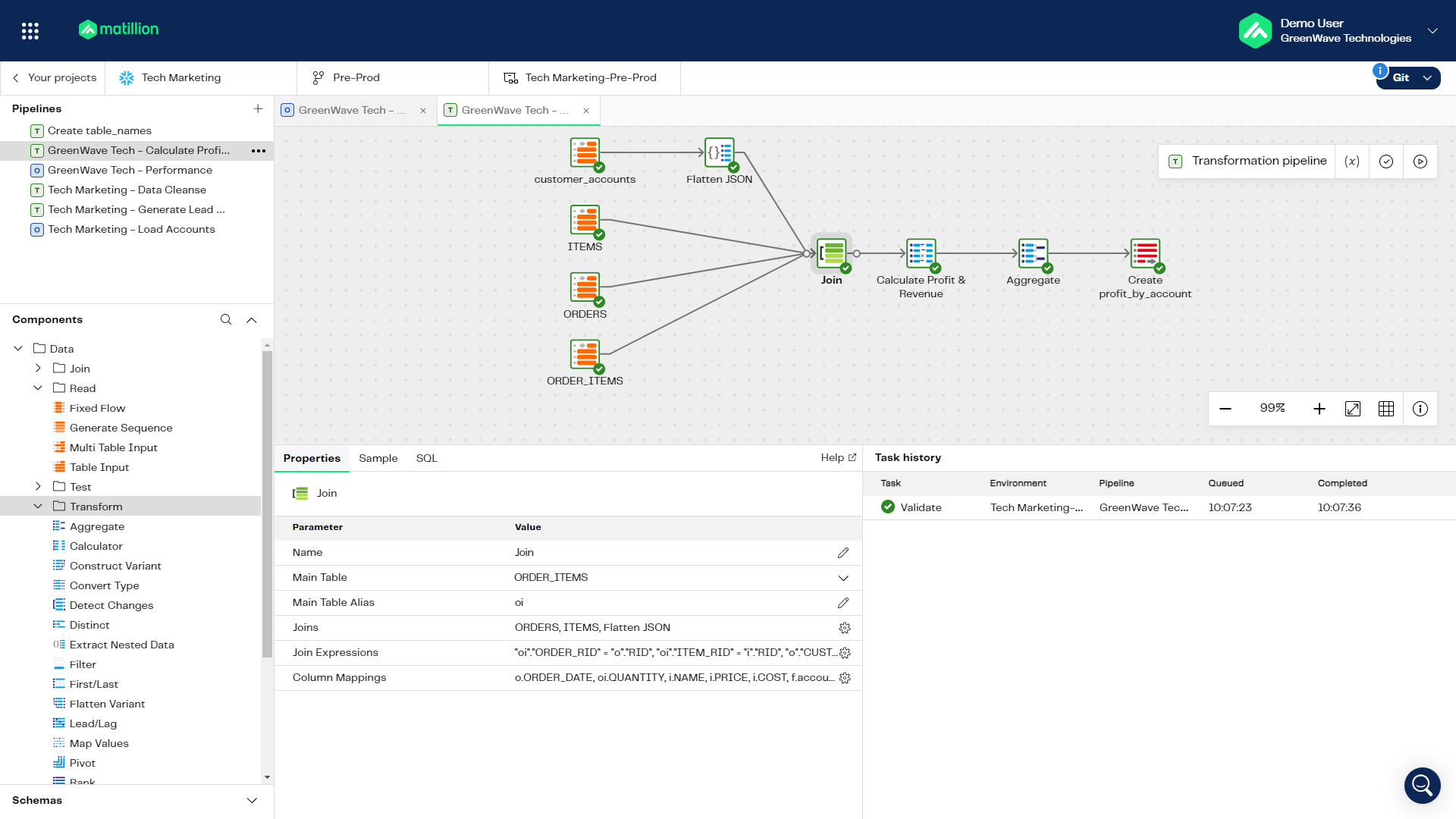

Perform complex data transformation with or without code

Use an intuitive visual designer to perform complex data loading, transformations and orchestrations with low or no code.

Harness our component library to speed up data delivery

Manipulate data with pre-built transformations for staging, models, REST connectors, orchestration and data governance.

Use data sampling to explore and analyze data faster

Focus validation on a smaller dataset before scaling up to accelerate prototyping and development.

Test and diagnose quickly

Perform quick testing and diagnosis with real-time success and failure indicators, and a task details pane for debugging.

Create custom transformations with our high-code SQL IDE

A high-code alternative for any use case. Save time by preempting mistakes: Matillion automatically checks all SQL statements for you.

Collaborate faster through Git integration

Use GitHub’s full feature set to push transformations throughout your data products and manage version control from within Matillion.

Share developments through the Matillion Community

Use a vast library of shared pipelines to import common orchestrations and transformations.

Import dbt models

Embed dbt pipelines directly within Matillion to streamline and manage all transformation workflows in one place.

Design low and high-code transformation pipelines

Stop maintaining hard-coded pipelines and use Matillion’s intuitive code-optional designer to build the transformations you need, faster.

An intuitive and fast drag-and-drop UI

Use Matillion’s visual orchestrator to compose your transformation pipelines.

Keep it simple, or make it ambitious

Leverage our low-code/no-code interface or use more complex components, like dbt or Python, when necessary.

Track transformations more easily

Automatically generate documentation for your transformation process.

Debug and test in real-time

Real-time success and failure indicators are shown while you build your transformation.

Collaborate faster and give your data team the power to be more productive

Use the Matillion Hub to improve collaboration and run, streamline and manage all transformation activities.

Git at the heart of everything

Leverage shared jobs and versioning to enable the entire team to contribute.

Scale teams with ease

Benefit from advanced user and rights management to ensure governance and control.

Enrich and cleanse modern and traditional datasets

Transform even complex semi-structured datasets such as JSON and Avro.

Cloud-native engine built for the speed of light

A truly cloud-native approach to data movement and transformation. Our unique stateless microservice agents can run in full SaaS or hybrid-SaaS mode. You can run large numbers of agents in parallel to process faster, or use fewer agents for a longer time when speed matters less.

Simple, scalable pricing

Pay-as-you-go pricing metered per agent per hour, giving you the flexibility to run tasks faster with more agents, or slower with fewer agents, while paying the same.
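To see why the agent count does not change the bill under per-agent-hour metering, here is a minimal arithmetic sketch. It assumes a hypothetical flat rate per agent-hour (not a published Matillion price) and a workload that splits perfectly across agents; real jobs rarely scale this cleanly, so treat it as an illustration of the pricing model only. The function `run_estimate` and the constant `RATE_PER_AGENT_HOUR` are made up for this example.

```python
# Hypothetical illustration of agent-hour metering.
# Assumptions: a flat, made-up rate per agent-hour and a workload that
# divides perfectly across agents (real jobs rarely scale linearly).

RATE_PER_AGENT_HOUR = 2.0  # hypothetical price, not a published Matillion rate


def run_estimate(total_agent_hours: float, agents: int) -> tuple[float, float]:
    """Return (wall_clock_hours, cost) for a job needing total_agent_hours of work."""
    wall_clock_hours = total_agent_hours / agents  # more agents finish sooner
    cost = agents * wall_clock_hours * RATE_PER_AGENT_HOUR  # billed per agent per hour
    return wall_clock_hours, cost


if __name__ == "__main__":
    for agents in (1, 4, 10):
        hours, cost = run_estimate(total_agent_hours=40.0, agents=agents)
        print(f"{agents:>2} agents: {hours:5.1f} h wall clock, cost {cost:.2f}")
```

Under these assumptions every row reports the same cost of 80.00; only the wall-clock time changes (40, 10 and 4 hours).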

Use pre-built data connectors or create your own with just a few clicks

“Building that single source of truth is only possible if you bring the right data, from the right sources, and have the confidence that this data is 100% quality-rich.”

Pavan Yerra, Senior Director, Digital and Data Products, Loyalty Tech and Conversational AI | Western Union

Featured Resources

Private, unified, and clean – Best practices for transforming customer data

Matillion Data Builder Series

This is the second instalment of our ...

Blog: Introducing Matillion Integration for dbt

Matillion’s core value is our obsession with customer ...

Success Story: Autodesk

With Matillion ETL, Autodesk vastly improved the velocity and quality of their enterprise data by retiring legacy technology.