- 06.03.2019

Improving Python Efficiency in Matillion: Generating Python File

In our blog post “Improving Python Efficiency in Matillion: Offload Large Python Scripts”, we discussed Python scripts that are better run remotely using SSH, AWS Lambda or AWS Glue. Some of these options require the Python code to be available as a file on the Matillion server, yet the user may still wish to write that code from within Matillion. Here we discuss two options for doing this.

Python Script Component

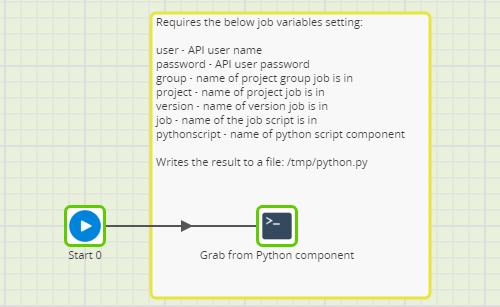

The natural place to write Python code is the Python Script component. Here you can take advantage of the editor's syntax highlighting, and the same approach converts Python scripts you have already written into files to be run externally. A Bash Script component exports the job containing the Python script using the Matillion API, parses out the relevant Python and writes the result to a file in /tmp. It requires a number of inputs to make the API call and identify the correct script.

When the script has run, it reports one of three outcomes: the file was created successfully, the API call was unsuccessful (with the reason), or the name of the Python Script component was incorrect. Please note that all variables are case sensitive.
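As a rough illustration of the API step and its error reporting, here is a minimal Bash sketch. It is not the downloadable job itself. The URL path is an assumption based on the Matillion ETL v1 REST API, and the function names and the `API_USER` and `API_PASSWORD` variables are hypothetical. Parsing the Python out of the export is left out because it depends on the export's JSON layout, which this post does not describe.

```shell
#!/usr/bin/env bash
# Sketch only: every name below is a hypothetical placeholder.

# Build the job-export URL. The path layout is an assumption based on the
# Matillion ETL v1 REST API; verify it against your instance's documentation.
# Names containing spaces or special characters would need URL-encoding.
build_export_url() {
  local base="$1" group="$2" project="$3" version="$4" job="$5"
  printf '%s/rest/v1/group/name/%s/project/name/%s/version/name/%s/job/name/%s/export\n' \
    "$base" "$group" "$project" "$version" "$job"
}

# Download the export to a file; on failure, print the HTTP status and any
# response body to stderr so the component's log shows why the call failed.
fetch_export() {
  local url="$1" out="$2" status
  status=$(curl -s -o "$out" -w '%{http_code}' \
    -u "${API_USER}:${API_PASSWORD}" "$url")
  if [ "$status" != "200" ]; then
    echo "API call unsuccessful (HTTP ${status})" >&2
    [ -s "$out" ] && cat "$out" >&2
    return 1
  fi
}

# Example usage (not executed here):
#   url=$(build_export_url "http://localhost:8080" "MyGroup" "MyProject" "default" "MyJob")
#   fetch_export "$url" /tmp/job_export.json || exit 1
```

Because every argument is substituted into the URL verbatim, a difference in case in a group, project, version or job name would produce a different path, which is consistent with the case-sensitivity note above.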

For more details on the job and for examples to download please see here.

Bash Component

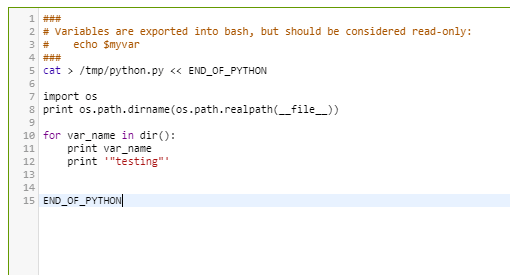

A different approach is to write the Python code directly into a Bash Script component and use a heredoc to write it to a file. An example is shown in the screenshot.

The advantage of this approach is that it avoids the need for the API call, so the file is also faster to create. It also means users aren’t tempted to “Run” the code from within Matillion.
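For readers without access to the screenshot, the heredoc technique can be sketched as follows. The file name `/tmp/example_script.py` and the Python body are hypothetical placeholders, not the contents of the original example.

```shell
#!/usr/bin/env bash
# Hypothetical output path; the post writes generated files to /tmp.
SCRIPT_PATH="/tmp/example_script.py"

# Quoting the delimiter ('PYEOF') stops Bash from expanding $variables or
# backticks inside the heredoc, so the Python source is written verbatim.
cat > "$SCRIPT_PATH" <<'PYEOF'
import sys


def main():
    # Placeholder logic standing in for the real processing.
    print("Hello from the generated script")
    return 0


if __name__ == "__main__":
    sys.exit(main())
PYEOF

# Report the outcome so it appears in the component's output.
if [ -s "$SCRIPT_PATH" ]; then
  echo "Created $SCRIPT_PATH"
else
  echo "Failed to create $SCRIPT_PATH" >&2
  exit 1
fi
```

The quoted delimiter is a deliberate choice: with an unquoted `PYEOF`, Bash would try to expand anything in the Python code that looks like a shell variable or command substitution before writing the file.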

Conclusion

In general, we recommend avoiding Python scripts in Matillion where possible and instead using components to push the processing down to the target data warehouse in an ELT approach. However, there may be circumstances where this isn’t possible due to the data used or the nature of the transformation.

In those circumstances, we recommend the Python script is run remotely. We recommend creating the Python script as a file on the Matillion server and using one of the approaches discussed here to run it remotely.
