- Blog

- 02.21.2018

- Data Fundamentals

Using the Google Sheets Query Component in Matillion ETL for Snowflake

Matillion uses the Extract-Load-Transform (ELT) approach to delivering quick results for a wide range of data processing purposes: everything from customer behaviour analytics, financial analysis, and even reducing the cost of synthesising DNA.

The Google Sheets Query component in Matillion ETL for Snowflake presents an easy-to-use graphical interface, enabling you to pull data from your spreadsheets stored on Google Drive directly into Snowflake. Customers are using this to pull user maintained data from their Google Sheets into Snowflake, to enhance other data from different sources.

The connector is completely self-contained: no additional software installation is required. It’s within the scope of an ordinary Matillion license, so there is no additional cost for using the features.

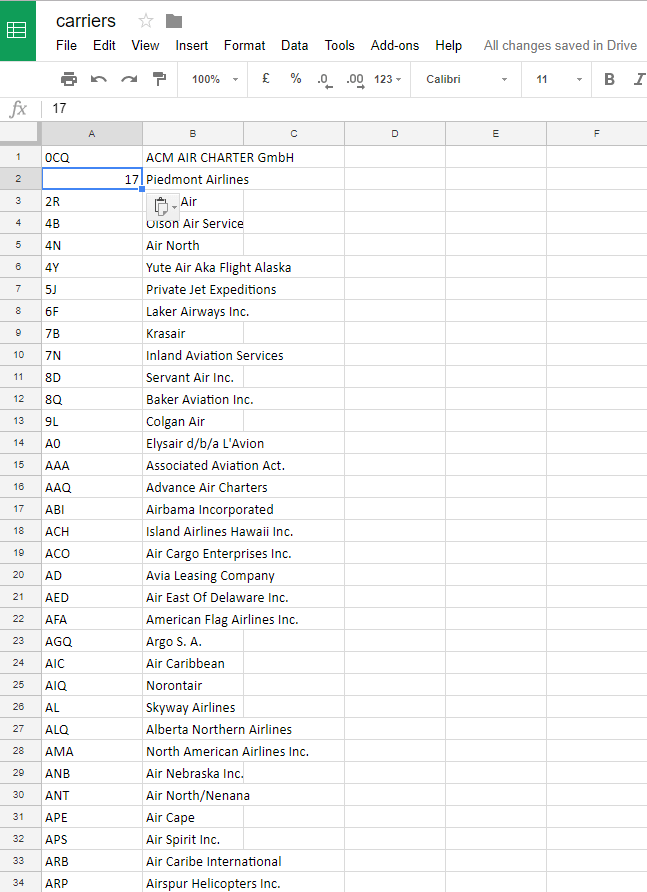

We are going to pull some data from a carriers spreadsheet which we set up on our Google Drive:

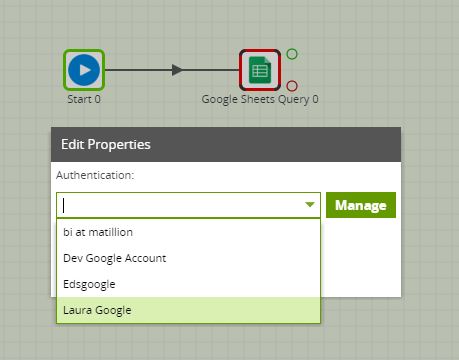

Authentication

The first step in configuring the Google Sheets Query component is to authenticate Matillion to access the Google Sheets file on Google Drive. You can do this via OAuth. Find further details on how to configure OAuth here.

Once set up select the OAuth in the Authentication Drop down:

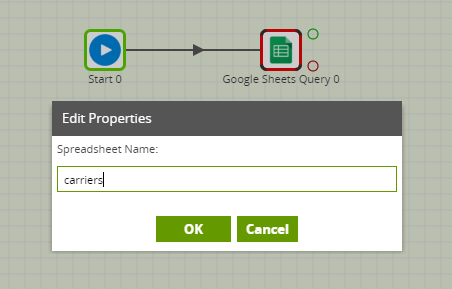

Spreadsheet Name

This is the name of the file to pull data from. This must exactly match the name from Google Drive.

Within Edit Properties there is the option to specify whether the top of row of data should be the deader. This will allow you to create column names for the new table. Also you can specify the Cell range to limit the data you will pull through when you run the component.

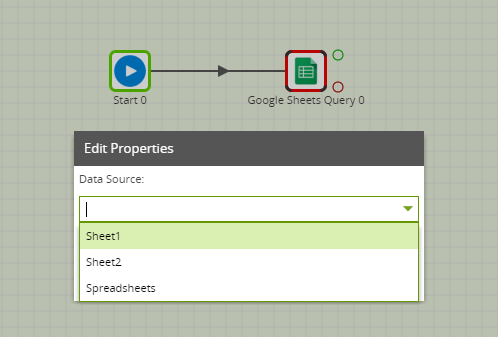

Data Source

Next you can choose the data you want to load into Snowflake from the Data Source drop down. This is a list of the sheets in the file and also details of the spreadsheet:

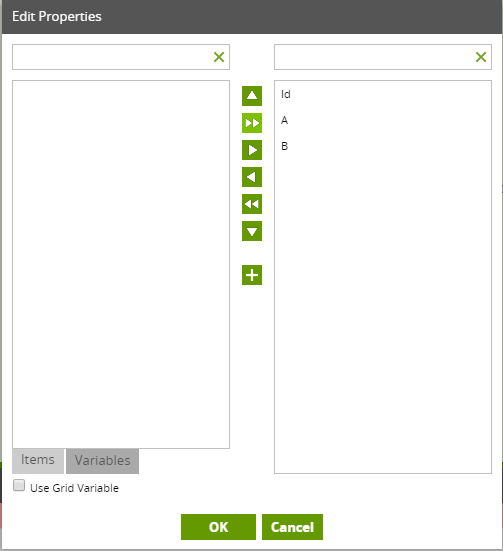

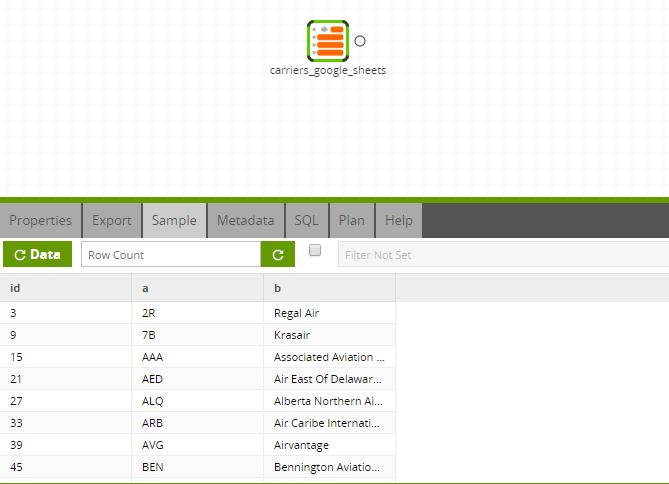

After choosing the data source, the next step is to choose the required fields from the spreadsheet in the Data Selection. If headers are available, this will become the header names. Otherwise, these will be the column letters. An ‘Id’ column is also available to be selected. This will bring through the row number. Your data source configurations will form the new table which is created in Snowflake.

Data Source Filter

You can add a filter to the data you are bringing through into Snowflake using the Data Source Filter.

Connection Options

The driver supports some additional parameters you may want to explore. However, none of the Connection Options are mandatory and the Google Sheets driver usually gives you sensible defaults. Should you wish to explore your Connection Options, further details on the options are available here.

Running the Google Sheets Query Component in Matillion ETL for Snowflake

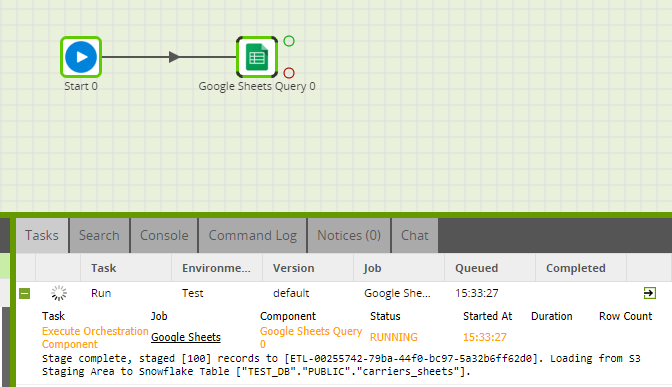

Before you can run the component, you need to name the Target Table. This is the name of the new table you are creating to write the data into in Snowflake. Finally you need to specify a S3 Staging Area. This is a S3 bucket which will temporarily store the results of the query before the data is loaded into Snowflake.

This component also has a Limit property which you can use to force an upper limit on the number of records returned. We recommend using either a limit or a filter to reduce the number of rows returned and to improve the speed of your job.

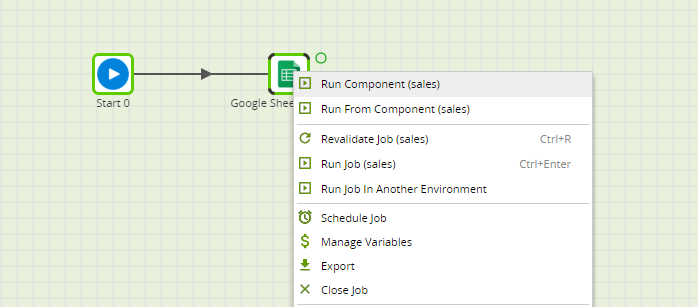

You can run the Orchestration job, either manually or using the Scheduler, to query your data and bring it into Snowflake.

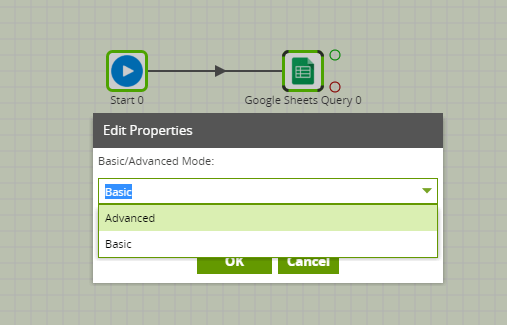

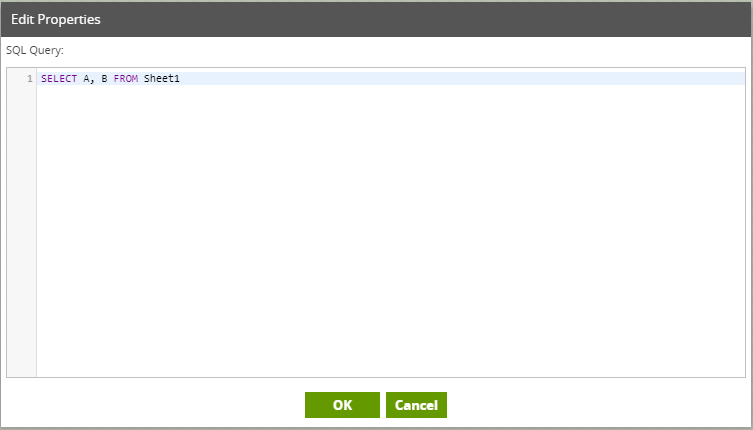

Advanced mode

The Google Sheets Query component offers an “Advanced” mode instead of the default “Basic” mode. In Advanced mode, you can write a SQL-like query over all the available fields in the data model. This is automatically translated into the correct API calls to retrieve the data requested.

Transforming the Data

Once you have brought through the required data from the Spreadsheet into Snowflake, you can use it in a Transformation job, perhaps to enhance existing data:

In this way, you can build out the rest of your downstream transformations and analysis, taking advantage of Snowflake’s power and scalability.

Useful Links

Google Sheets Query Component documentation

Component Data Model

Google 3rd Party OAuth Setup

Featured Resources

What Are Feature Flags?

Feature flags are a software development tool that has the capability to control the visibility of any particular feature. ...

BlogHow Your Data Teams Can Do More With Marketing Analytics

Improve your marketing analytics with Matillion Data Productivity Cloud that enables businesses to centralize and integrate ...

BlogThe Importance of Data Classification in Cloud Security

Data classification enables the targeted protection and management of sensitive information. Personally Identifiable ...

Share: