- Blog

- 08.06.2020

How to Create Your Own Connector in Matillion ETL

Centralizing all of your business-critical data into your cloud data warehouse has never been easier with the new Create Your Own Connector feature in Matillion ETL. This feature comprises a new API Extract wizard profile builder that will guide you through easily creating any custom connectors. While our current API Query functionality transforms data to some extent to get it into a tabular format, the API Extract functionality instead loads raw data from your source system. (For more information on the difference between Query and Extract, read this blog)

API Extract: Get data now, use later

API Extract is especially helpful for those users who just want to land the data somewhere, without thinking yet about data mapping and transformation. Data lake users are a perfect example. All of the data warehouses we support can handle semi-structured data. Matillion ETL provides a number of transformation components to flatten and unnest semi-structured data, making the API Extract functionality a quick and easy way to load data for later use in downstream analytics.

Let’s look at how simple and quick it is to build your own custom connectors. In this example, we will connect to the public data source for SpaceX History data.

Building an API Extract profile

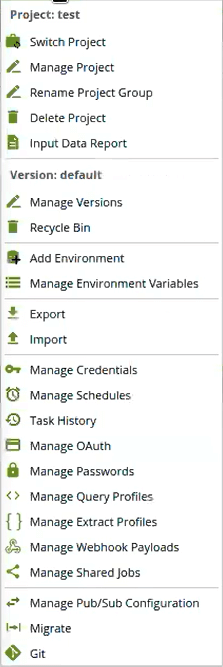

First, you will need to build your API Extract profile. Under the Project Menu you will find “Manage Extract Profiles” in the same place you’d find the renamed “Manage Query Profiles” to build API Query Profiles.

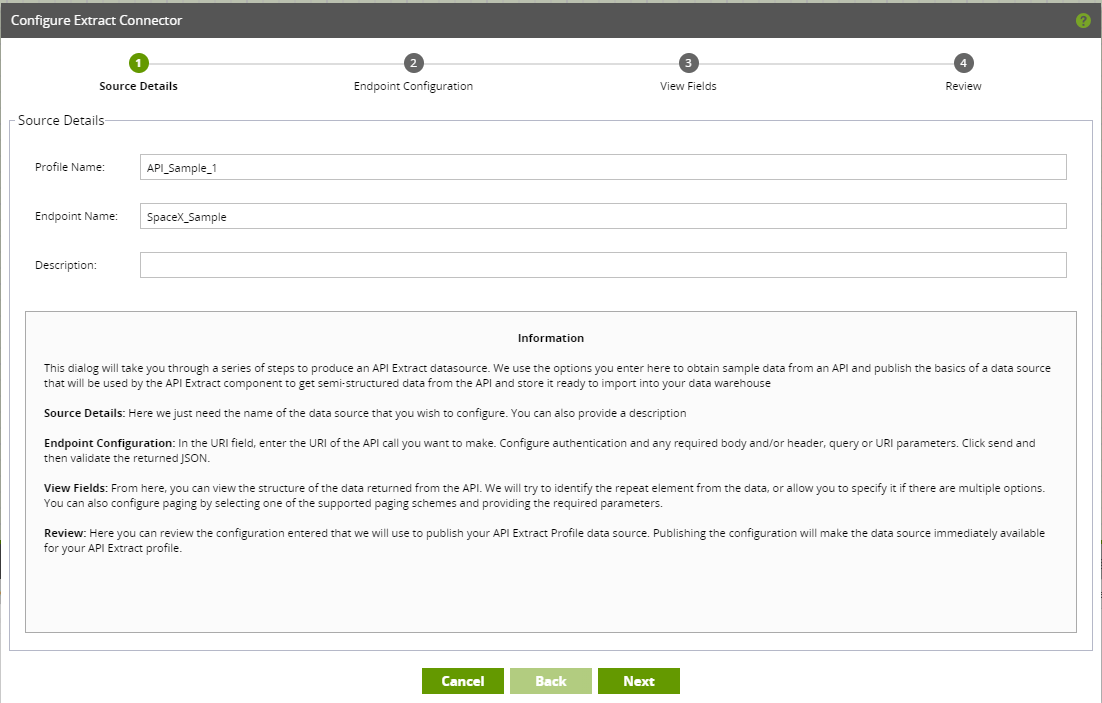

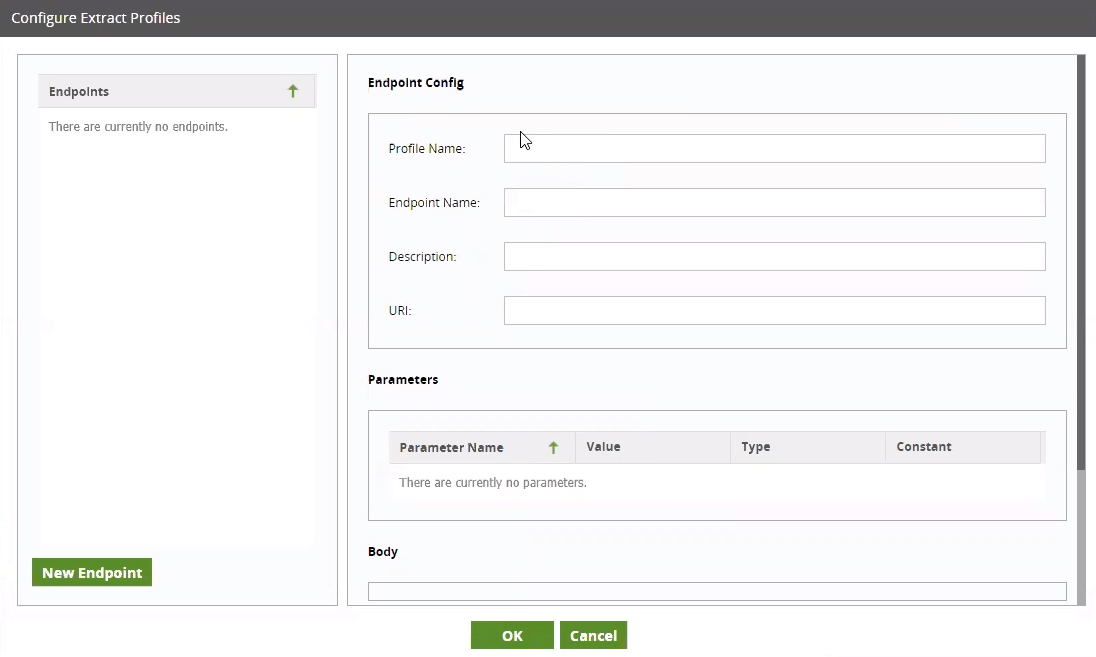

Let’s get started by adding our own extract profile, by clicking on the ‘+’ icon in the bottom left. First we need to provide a name for our new API Extract Profile. We will call ours API_Sample_1 and click ‘Okay’. This creates the profile, which we can now go and configure by selecting the cog next to the new Extract Profile we have just added. We now want to build a “New Endpoint” for our Profile.

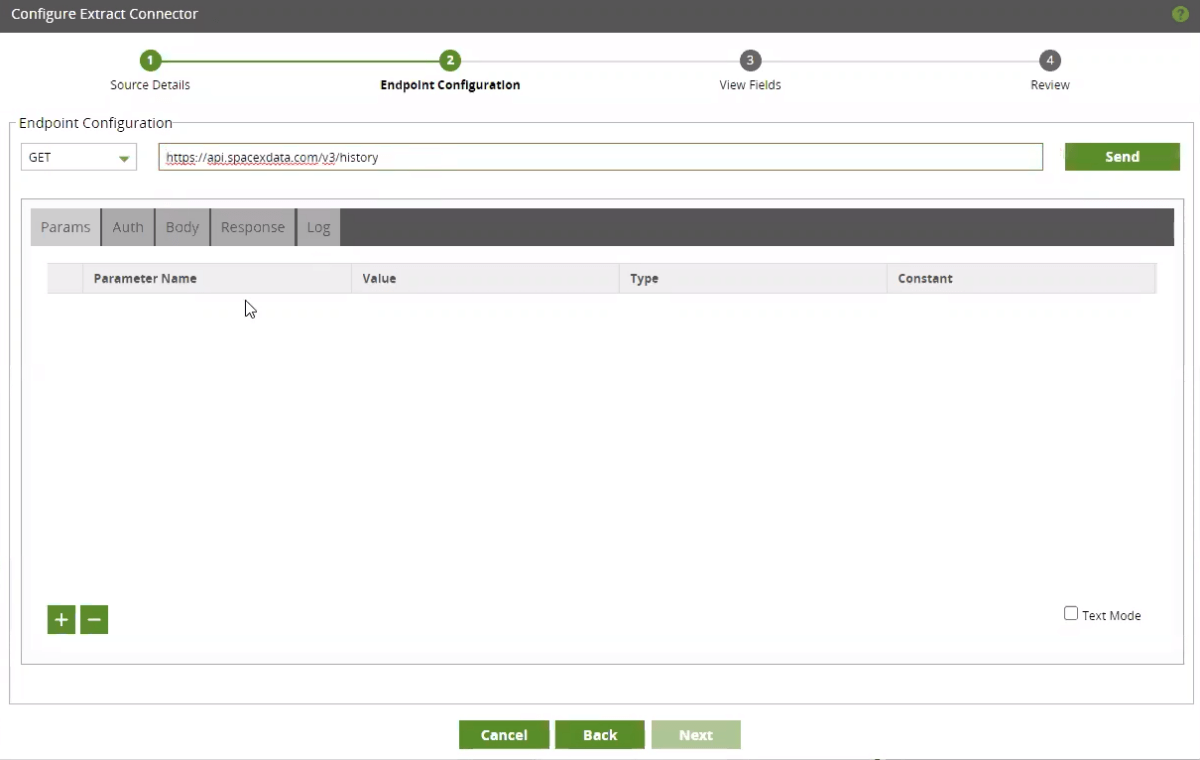

Clicking this opens the wizard, which will guide us through building the Profile. Here is where we can supply the URI to our endpoint. For this example, we will use the link provided to ‘GET’ the historical SpaceX data. After clicking ‘Send’ we are testing the connection and, almost instantaneously, we get a response with the data found in the data source .

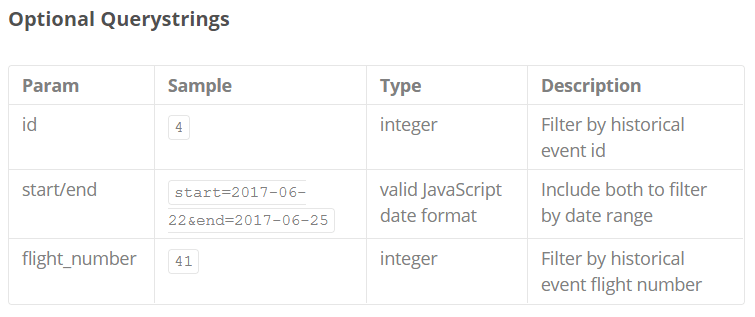

Just under the URI, there are a number of tabs to provide additional configurations. Within our History SpaceX data, there are some sample Parameters:

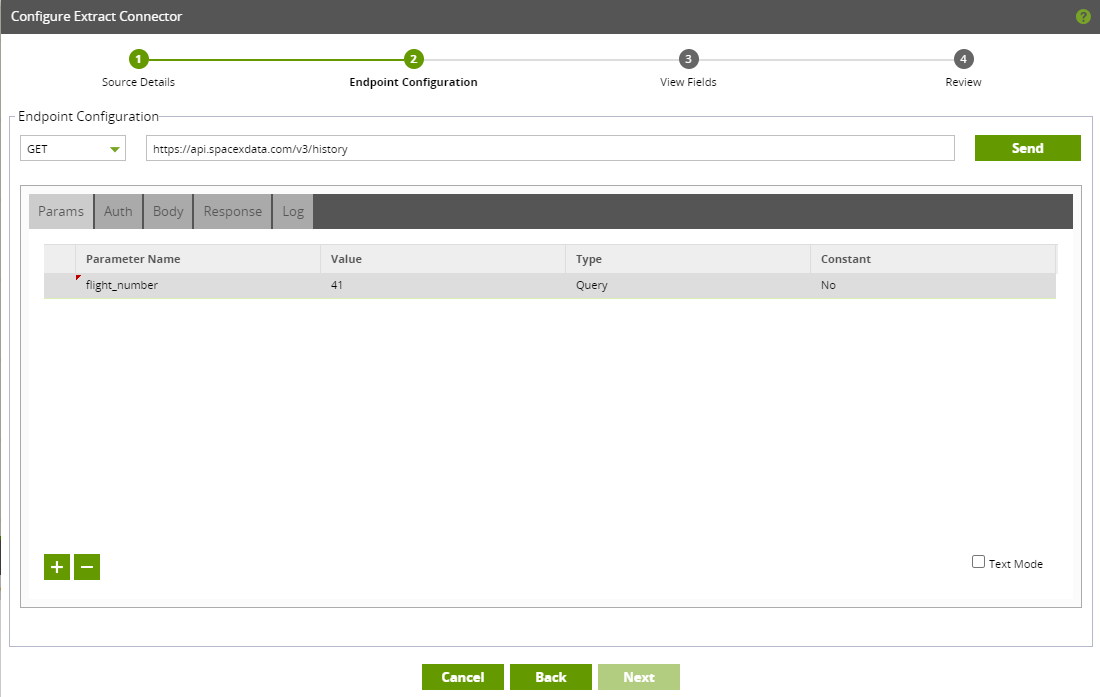

For this example we will set a parameter for flight_number. In Matillion ETL, that parameter looks like this:

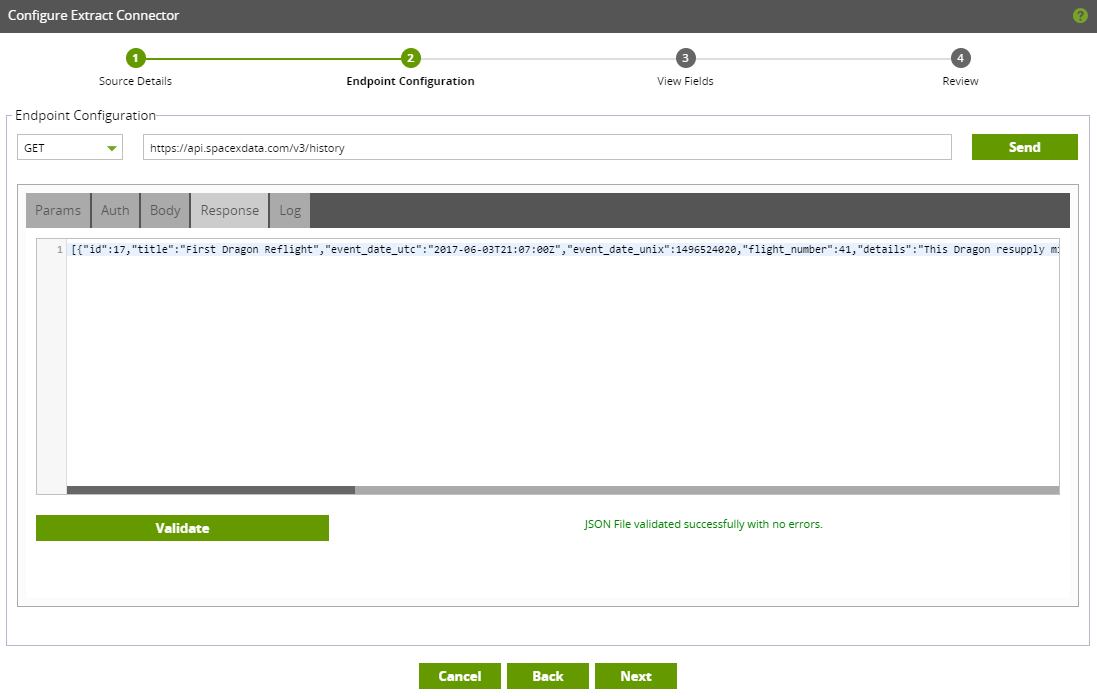

If we click ‘Send’ and check the ‘Response’ tab, we can see a valid response and one record for when flight_number equals 41.

By being able to test our configurations as we go, we are able to amend our Profile settings and test the response to ensure that we are accessing the data we expect.This, in turn, builds confidence that once we run an Orchestration Job with this Profile, it will load the data we want into our cloud data warehouse.

Additionally, we could also:

- Provide ‘Auth’ details if required

- Paste in a sample ‘Body’

- Or check the ‘Log’

Check out our support documentation for more information on the API Extract Profile wizard.

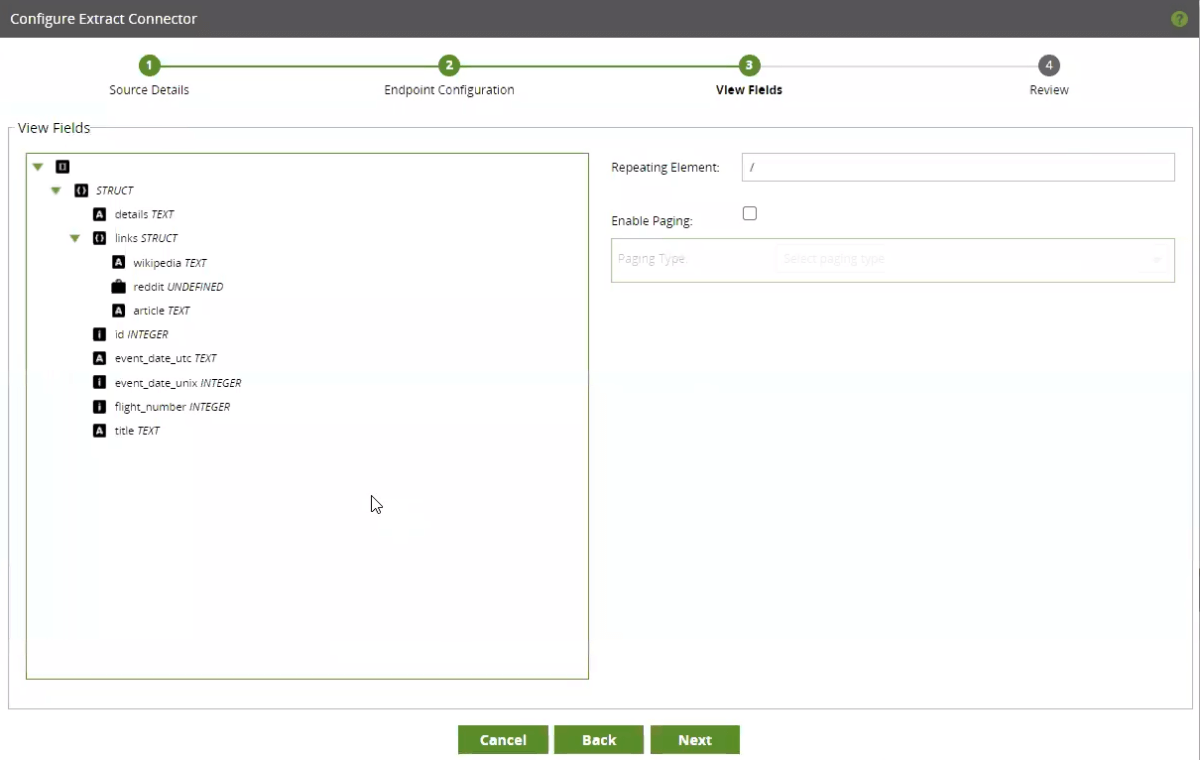

Once we are happy with the settings, we can click ‘Next’ to continue creating our profile. We now get a view of the metadata in the data source. Here, if we have more than one array at the same level, we can choose a repeating element. In this case, there is a single array so the profile does not need a repeating element set. At this stage, we could also set the pagination strategy if needed.

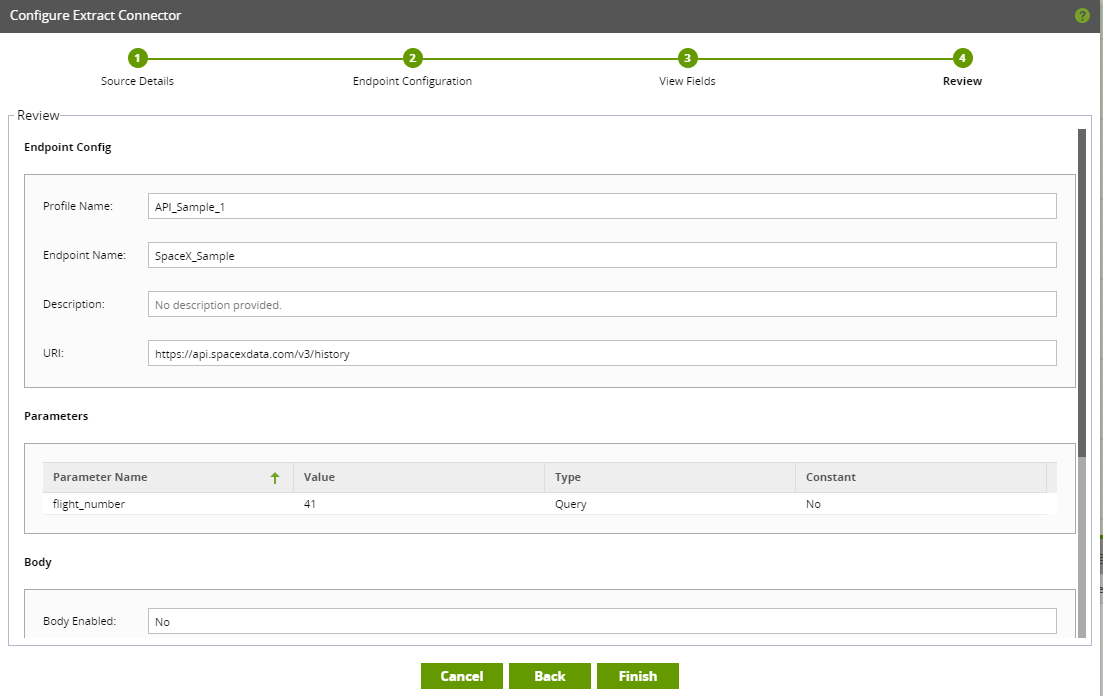

The final step of the wizard is a ‘Review’ screen so you can check your Extract Profile configurations. If the ‘Review’ screen looks correct, we can click on the ‘Finish’ button and then ‘OK’. We should now have a new and configured Extract Profile ready for use.

Loading data to your cloud data warehouse using the API Extract component

Now that our Extract API Profile has been created, we are ready to load our data. Back on the main Matillion ETL screen, right-click on the left-hand panel and select “Add Orchestration Job,” which we will name Load_SpaceX.

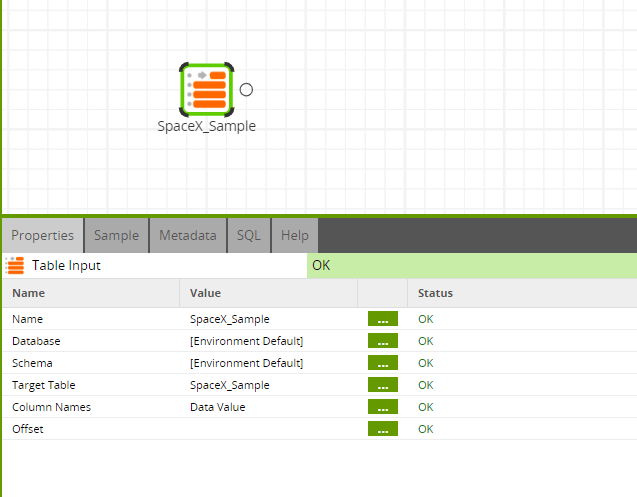

Once the job has opened, let’s look for the API Extract component and connect it to the Start component. We first need to change the API Parameter to use the profile we just created – ‘API_Sample_1’. The data source will be ‘SpaceX_Sample’. We now need to give a name to our target table where our data will be stored within our data warehouse. Let’s call it ‘SpaceX_Sample’ to match our endpoint. Lastly, we need to choose a staging area. The component is valid when the borders turn green, so we can now run the job. Remember, this component will load the raw JSON data.

Transforming your semi-structured data in Matillion ETL for advanced analytics

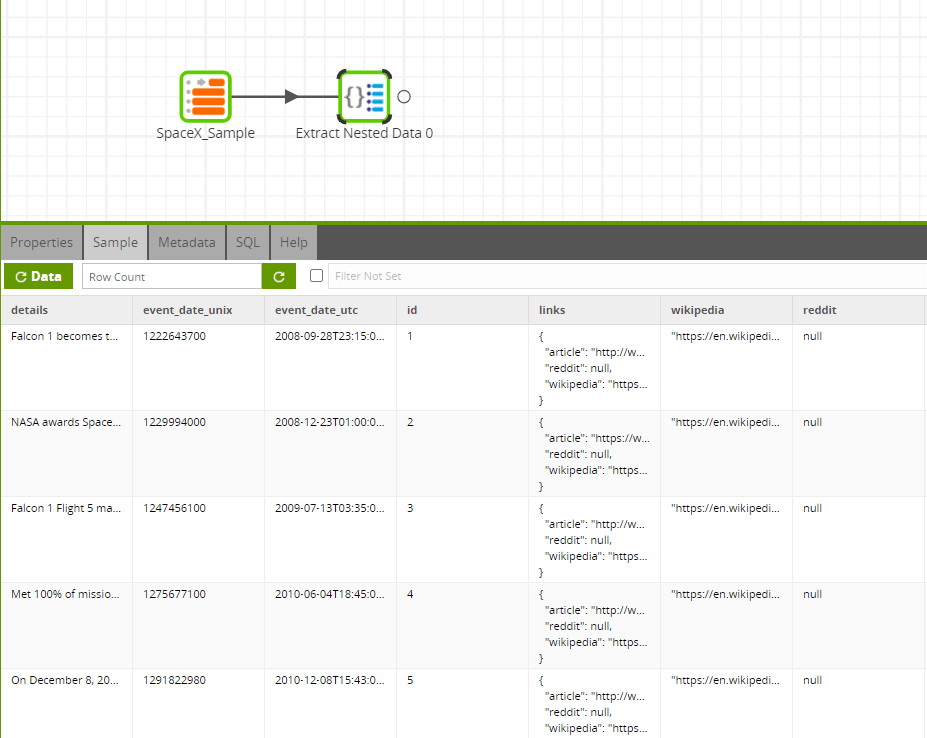

This job should only take a few seconds to run. Once done, we can create a new Transformation Job called ‘Transform_SpaceX’. We will drag in a Table Input component and use it to find our ‘SpaceX_Sample’ table. Let’s also bring across all the columns in the Column Name parameter.

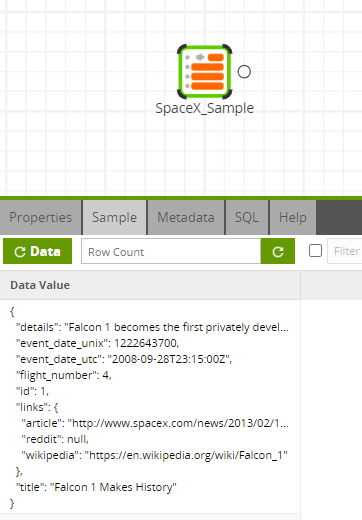

We can verify that everything has come across correctly through the metadata tab and the Sample tab. When we sample the data, we can see the semi-structured data not yet flattened.

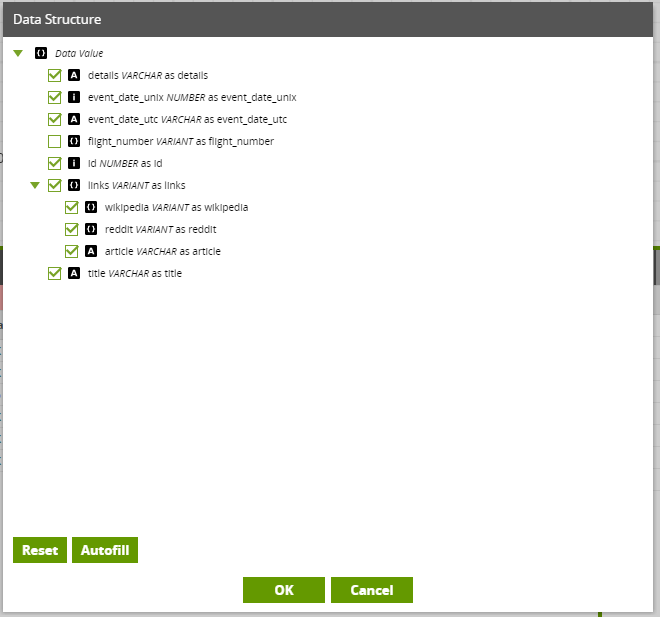

To make this data analytics ready, we may need to extract this nested data. To do this, we can use the Extract Nested Data component. Under Data Structure, we can select ‘Autofill’ to display the schema. This will auto-select fields or we can manually select or deselect fields as required for our use case. In this instance we will select all fields. Back in the Properties tab, we will select ‘No’ for the property called ‘Include Input Column,’ which will remove the original structure from our table.

Now when we sample the data we can see that it is now flattened out into a more readable, tabular form and it is now much easier to manipulate and consume.

As you can see, you can use Create Your Own Connector to bring data in from your restful API source systems, and then prepare that data so it is ready for downstream analytics within minutes. Try it out, let us know what you have been able to connect to and provide your feedback to the Matillion Community.

Featured Resources

What Are Feature Flags?

Feature flags are a software development tool that has the capability to control the visibility of any particular feature. ...

BlogHow Your Data Teams Can Do More With Marketing Analytics

Improve your marketing analytics with Matillion Data Productivity Cloud that enables businesses to centralize and integrate ...

BlogThe Importance of Data Classification in Cloud Security

Data classification enables the targeted protection and management of sensitive information. Personally Identifiable ...

Share: