- Blog

- 05.29.2020

Managing Type 2 Slowly Changing Dimensions in Matillion for Snowflake

In this partner guest blog, Joe Caparula from Pandata Group explains how to manage Type 2 Slowly Changing Dimensions in Snowflake with the Detect Changes component.

Attributes in data warehouse dimension tables change over time, and you may want to retain the history of those changes as separate dimension records. This is known as a Type 2 Slowly Changing Dimension (SCD). Changed records are not overwritten but rather flagged as “Inactive” with beginning and ending effective dates, while the updated values are inserted as new records. Because each record still has a distinct key, the “old” fact records still join to the old dimension value, creating a historical trail of activity.

Using the Detect Changes component to manage Type 2 SCDs

Matillion ETL for Snowflake can manage Type 2 SCDs using a Detect Changes component as its central mechanism for determining the updates and inserts for changed records. Detect Changes compares an incoming data set to a target, then, using a list of comparison columns, determines if the records are Identical, Changed, New, or Deleted. These appear as an Indicator field in the Detect Changes output. Records flagged as New or Changed are filtered, and a subsequent transactional flow manages Insert actions (New and Changed) and Update actions (Changed only) based on those indicators.

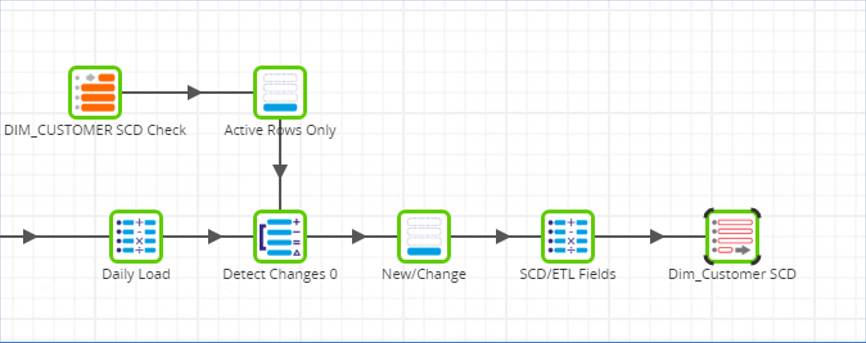

In the example below, a daily load of customer data is compared to the existing Customer dimension table using Detect Changes (note that only active dimension records are being considered).

The Detect Changes component matches on the natural key (in this case, Customer ID) and identifies columns that we want to track SCD on (e.g. region, customer_type, etc.). The component adds an Identifier column (N = New, C = Changed, D = Deleted, I = Identical) based on the outcome of the column comparison. We are only interested in New or Changed records, so we filter on those. The SCD/ETL Fields component adds derived values for Active Flags, Effective Date (essentially today’s date), Open End Date (e.g. 12/31/2099), etc. that will be used to fill system-generated columns during the insert/update. Finally, a transient table is rewritten to act as a source for the next step.

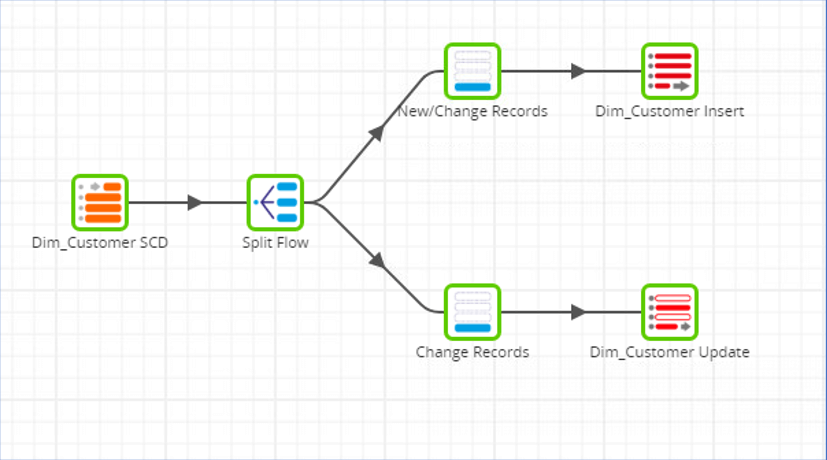

Inserts and updates to the dimension

In the Insert/Update transformation that follows, the transient table that was populated in the previous job is used as a source to do the actual inserts and updates to the dimension. Records flagged as New or Changed will be inserted into the dimension table with an Active Flag of ‘Y’ and today’s date as the Effective Date and the Open End Date as the End Date. The updates only occur for changed records (identifying these “old” records by the dimension key) . . . these will get an Active Flag of ‘N’ and an End Date of today’s date.

Things to keep in mind

Note that Matillion requires that the actions be split between the Detect Changes activity and the Insert/Update activity (via a transient table) to avoid the mistiming of inserts relative to updates.

Also keep in mind that you can use Detect Changes to flag for updates or inserts without using Type 2 SCD (this would be known as Type 1) . . . simply remove the special SCD fields such as Active Flag and change the Insert filter to New only. As long as you have a reliable natural and dimensional key you can update any or all fields in your dimension table. Detect Changes is a very versatile component that enables total management of the dimensional changes in your Snowflake data warehouse.

About the author

Joe Caparula is a Senior Consultant with Pandata Group with extensive experience across several data integration platforms. His most recent projects include architecting and developing modern cloud data platforms with Matillion and Snowflake.

Learn more about Matillion ETL for Snowflake

Want to learn how Matillion ETL for Snowflake can help your organization with data in the cloud? Request a demo.

Featured Resources

What Are Feature Flags?

Feature flags are a software development tool that has the capability to control the visibility of any particular feature. ...

BlogHow Your Data Teams Can Do More With Marketing Analytics

Improve your marketing analytics with Matillion Data Productivity Cloud that enables businesses to centralize and integrate ...

BlogThe Importance of Data Classification in Cloud Security

Data classification enables the targeted protection and management of sensitive information. Personally Identifiable ...

Share: