- Blog

- 04.26.2022

- Data Fundamentals

Connecting Matillion ETL to a Provisioned Amazon Redshift Cluster: A Guide

In Matillion ETL, the metadata for connecting to Amazon Redshift is held in an artifact known as an Environment. Matillion ETL Environments can also hold additional information that is used during data extraction and loading.

Prerequisites for connecting to a provisioned Amazon Redshift cluster

The prerequisites for connecting Matillion ETL to a provisioned Amazon Redshift cluster are:

- Access to Matillion ETL for Amazon Redshift

- Permission to create and edit Environments in Matillion ETL

- Your Matillion ETL instance has network access to your provisioned Amazon Redshift cluster

- Your provisioned Amazon Redshift cluster is Available (not paused)

- Amazon Redshift credentials:

- The cluster Endpoint

- Amazon Redshift username and password

Amazon Redshift provisioned cluster endpoints

Every provisioned Amazon Redshift cluster has a network address known as an Endpoint that is used for all communication.

Amazon Redshift provisioned cluster endpoints are made up of a hostname, a port number and a database name. They look like this:

your-cluster-identifier.guid.region-identifier.redshift.amazonaws.com:port/database-nameTo find your own Amazon Redshift provisioned cluster endpoint, start at the provisioned clusters dashboard in your Amazon Redshift console.

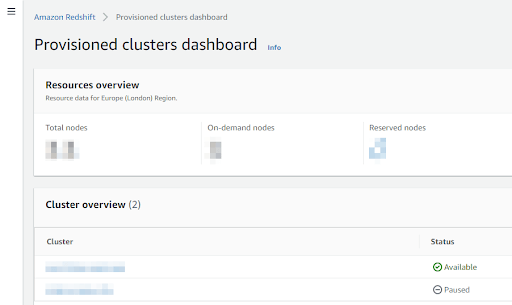

The screenshot below shows two provisioned clusters. One is marked as Available, so you will be able to connect to it from Matillion. The other is marked as Paused, so it will not respond until the cluster has been resumed.

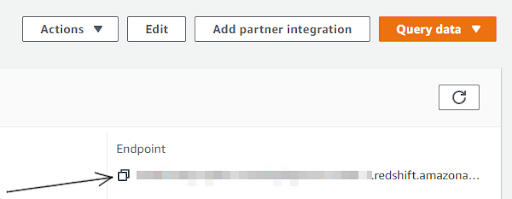

Click into an available cluster to access its details screen. You will find the endpoint listed under the general information panel.

You can copy the entire endpoint string using the button highlighted above. Later in this guide, you will need just the hostname part of the endpoint. For example, if your endpoint is:

cluster1.ecddc61tbkeh.eu-west-2.redshift.amazonaws.com:5439/dbname.. then the hostname part is:

cluster1.ecddc61tbkeh.eu-west-2.redshift.amazonaws.comThe hostname resolves to the IP address of the leader node in the cluster. It might be a public address or a private address, depending on your VPC configuration, and whether you have enabled enhanced VPC routing.

How to connect a Matillion ETL Environment to a provisioned Amazon Redshift cluster

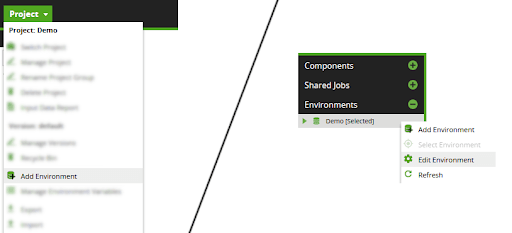

To create or edit a Matillion ETL Environment, start from the main Project menu, or from the context menu in the Environments panel.

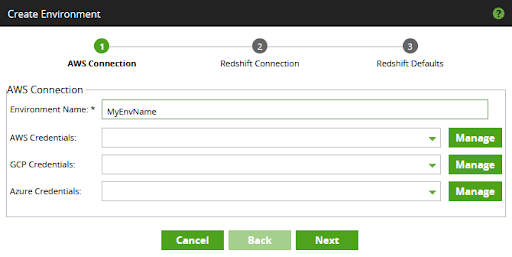

This action will pop up a Create or an Edit Environment window containing three dialogs.

In the first dialog, choose a name for the Environment, and optionally set any AWS, Azure, or GCP credentials that you intend to use.

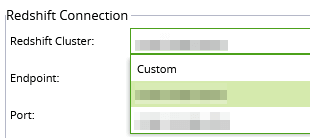

In the second dialog, there are two ways to complete the fields:

- Choose one of your provisioned clusters from the Redshift Cluster dropdown list, and use the auto populated defaults.

- Choose "Custom" as the Redshift Cluster dropdown list, and complete the remaining fields manually

Option 1 is the easier method, but will only be available if your Matillion ETL instance has sufficient IAM Roles and Permissions, and the Amazon Redshift cluster you wish to connect to is in the same AWS account. If that is the case, then you will find the Redshift Cluster dropdown contains a list of all your provisioned cluster identifiers. When you select one of them, the remaining fields on that panel will auto populate. The screenshot below shows how to select a provisioned cluster that way.

If the Redshift Cluster dropdown contains nothing but "Custom", or if the cluster you need is not among those listed, then you will have to choose option 2: complete the remaining fields manually.

To complete the fields in this part of the dialog manually, you will need to know your Amazon Redshift provisioned cluster endpoint, described in the previous section.

For example if your Amazon Redshift provisioned cluster endpoint is

cluster1.ecddc61tbkeh.eu-west-2.redshift.amazonaws.com:5439/dbname

.. then set

- The "Endpoint" to the hostname part:

cluster1.ecddc61tbkeh.eu-west-2.redshift.amazonaws.com

- The Port to the numeric port value: 5439

.. as shown in the screenshot below.

Then set the Amazon Redshift username and its password, using the Matillion password manager.

If you are not using enhanced VPC routing, you should always tick the Enable SSL option at this point to protect data from unauthorized access.

You may also choose to add Advanced Connection Settings to set individual JDBC driver connection parameters. In most cases you will not need to use Advanced Connection Settings.

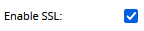

Press Next to enter the third dialog. While you are completing the details, make certain of two things:

- The dropdown lists for the database, default schema, and default bucket all show values that you can select.

- After completing the defaults, you get a success message when you press the Test button

Note that the Default Bucket setting is unrelated to the Redshift Connection settings in the second dialog. You can leave the Default Bucket blank. But if the dropdown does not contain any selectable values, it is likely you will have problems with data loading.

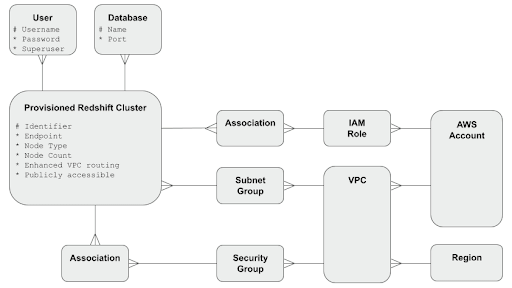

Connectivity metamodel for Matillion ETL and Amazon Redshift

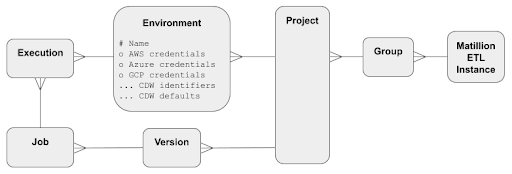

Matillion ETL stores Amazon Redshift connection details inside an Environment object. The Environment contains the Amazon Redshift endpoint and port, plus the user credentials in the form of a username and a managed password.

Environments can optionally also hold additional cloud provider information, in the form of AWS, Azure, and GCP credentials. This information is used by various orchestration components such as the Data Transfer.

Matillion ETL Environments exist within a Project and a Group. Orchestration and Transformation Jobs are versioned within Matillion ETL projects. Jobs always execute within the context of exactly one Environment.

Within an AWS account, you can create provisioned Amazon Redshift clusters. Every cluster exists within a VPC and a subnet group, and can be associated with IAM roles and Security Groups.

- The IAM roles grant permissions on other AWS resources, such as S3 buckets for data loading or for AWS Spectrum.

- The Security Groups govern network access.

Within every provisioned Amazon Redshift cluster you can create Databases and Users. Users with the CREATEUSER privilege are marked as superusers. At least one database and one superuser must always exist.

Once you’ve connected Matillion ETL and your provisioned Amazon Redshift Cluster

You can start to build workflows in Matillion ETL

Featured Resources

What Are Feature Flags?

Feature flags are a software development tool that has the capability to control the visibility of any particular feature. ...

BlogHow Your Data Teams Can Do More With Marketing Analytics

Improve your marketing analytics with Matillion Data Productivity Cloud that enables businesses to centralize and integrate ...

BlogThe Importance of Data Classification in Cloud Security

Data classification enables the targeted protection and management of sensitive information. Personally Identifiable ...

Share: