- Blog

- 06.15.2020

- Data Fundamentals

Shared Jobs in Matillion ETL: Using Variables to Speed Up Development (Part 2 of 2)

This is a two-part blog to walk you through setting up and creating a Shared Job in Matillion ETL using variables. Part 1 walked through the set up process for Job and Grid Variables for use later in a Shared Job. In Part 2, we will look at creating and packaging the Shared Job.

Using Shared Jobs, you can create your own components using the Shared Jobs feature. Shared Jobs bundle entire workflows – Orchestration Jobs (and the Transformation Jobs they link to) – into a custom component. In this way, Matillion ETL can help you save development time when it comes to common workflows and jobs that you will use again and again as it ultimately is a templated job to be reused across your projects.

In this blog, we build on the example in Part 1, to show you how you can create your own Shared Job.

Set up your Matillion job

In Part 1, we created a Transformation Job to filter our warehouse order data from three different tables. Now we want to make our Transformation Job that processes a warehouse table specified in a scalar and filters to values specified in a Grid Variable into a Shared Job. To do that, there are just two more steps we need to do:

- Since it is only possible to package up an Orchestration Job as a Shared Job, we need to make an Orchestration Job that calls our Transformation Job

- Then we can package up our new Orchestration Job into a Matillion Shared Job (which will also bring the Transformation Job into the package)

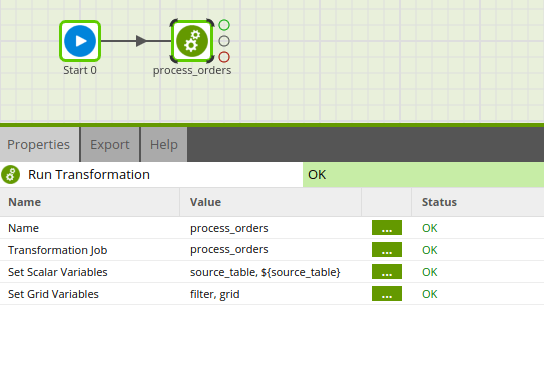

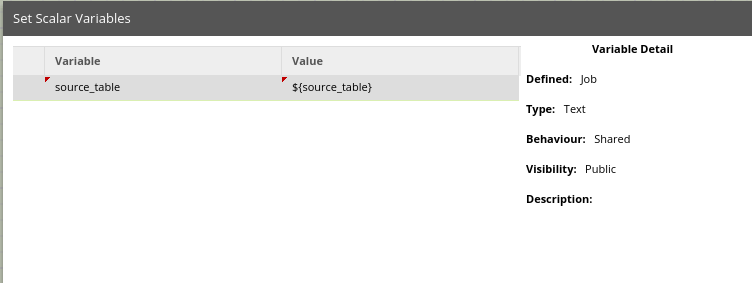

You will need to create the same Job Variable and Grid Variable that exist in the Transformation Job in Orchestration Job. This way, the Shared Job we create accepts the values as parameters, and can pass them to the Transformation Job. We also need to explicitly pass the Job and Grid Variables from this job into the process_orders sub-job. First, we can set the scalar Job Variable to reference our table variable:

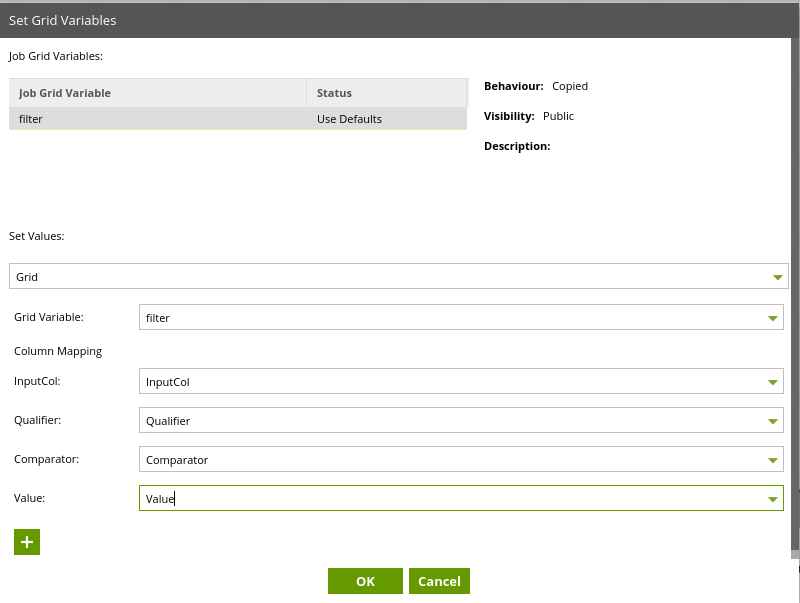

Then, we want to set the Grid Variable to reference our pre-configured Grid Variable that filters on year.

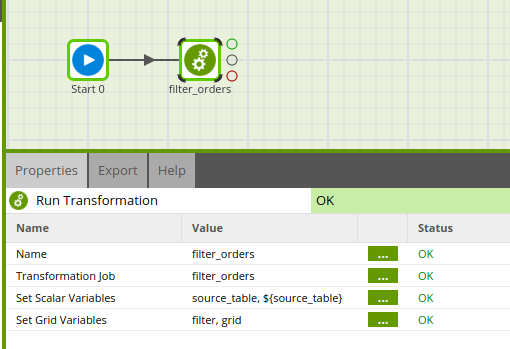

Now our Orchestration Job looks like this:

Generate Your Shared Job

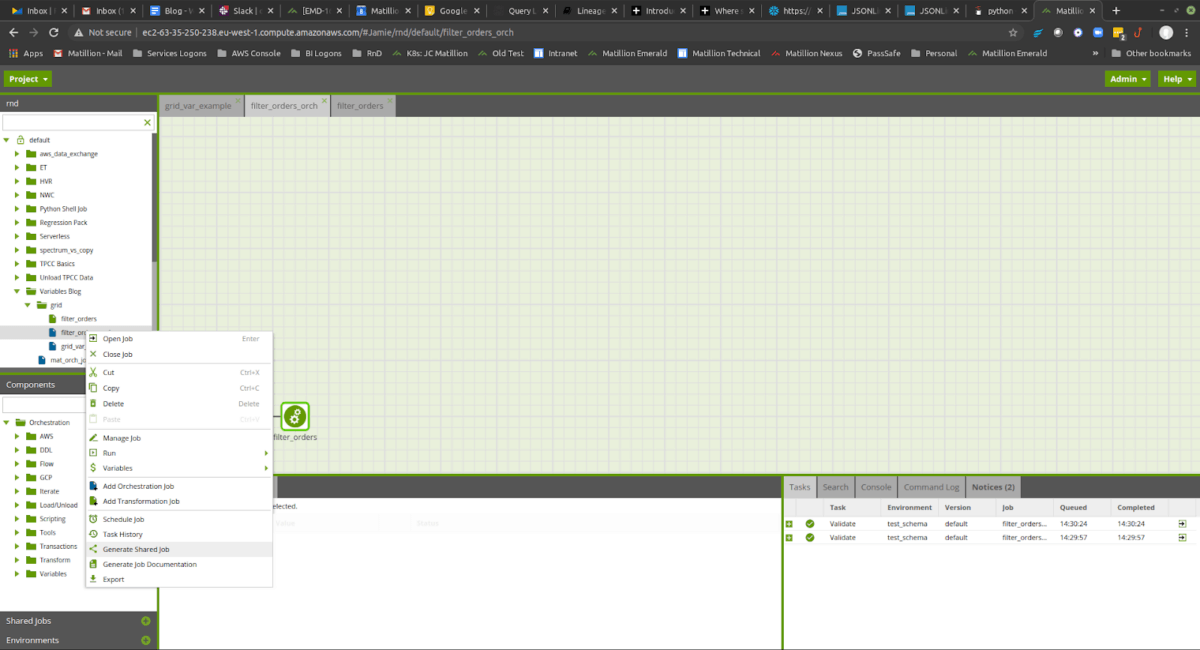

Now we can package the Orchestration Job as a Shared Job, find it in the Object panel, and right click and select “Generate Shared Job”

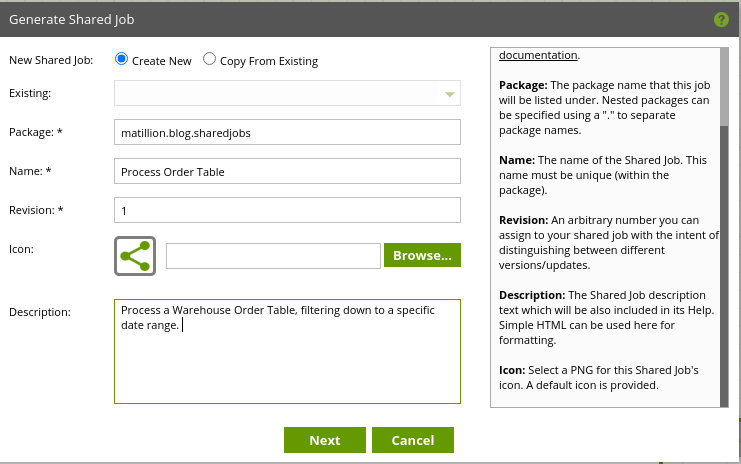

Make sure you are creating a new Shared Job, and add a package name, a Name for the shared job, and a description. You can also add your own icon, but for this example we’re going to use the default

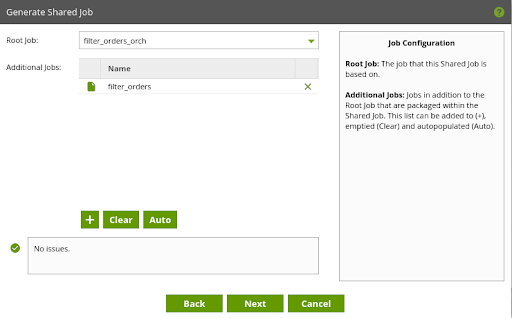

Matillion ETL will automatically determine which other jobs need packaging along with the Orchestration Jobs. You can amend this list if need be, but here Matillion ETL has correctly detected our sub-job Transformation.

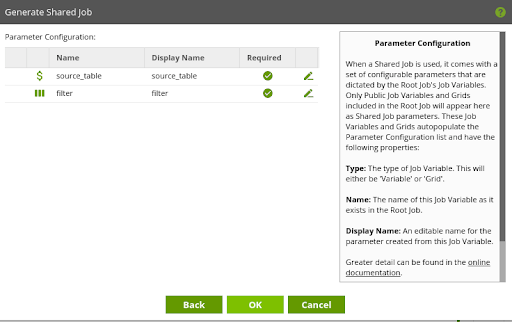

Here we are presented with a list of variables from the Orchestration Job we are packaging – now called Parameters. Parameters are the values that users of the Shared Job will be able to enter, and they directly correspond to the variables in the job.

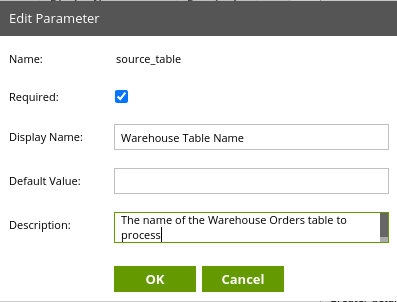

By clicking on the green pencil icon, we can edit our Parameters. Here I have added a more user-friendly name and a description of the Parameter, as well as marking it as ‘Required’ (the Shared Job will fail to validate if a user does not supply Required parameters).

Click Ok to save changes. Then click Ok in the Generate Shared Job box to generate the job.

Using your Shared Job

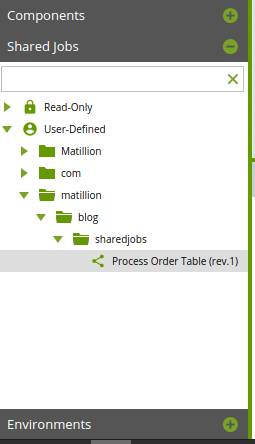

To access your newly created Shared Job, find the Shared Jobs panel on the left side of the Matillion ETL user interface.

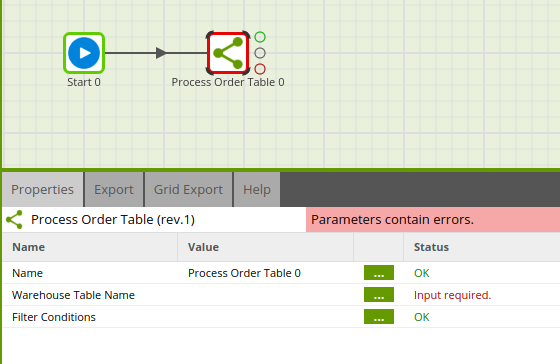

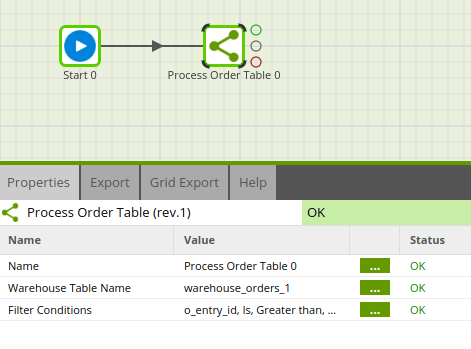

You can then drag your Shared Job onto an Orchestration Job like any other Matillion ETL Orchestration component:

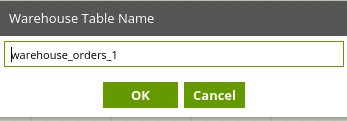

To run the job, we first need to set the properties. We can set the Warehouse Table Name to reference ‘warehouse_orders_1,’ which will validate the component.

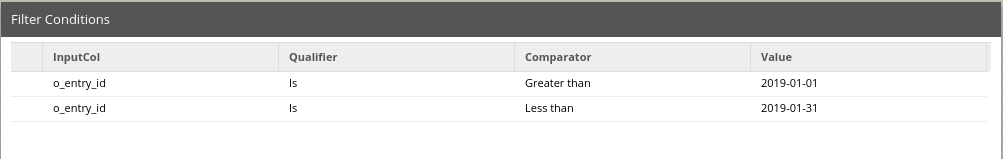

Finally, we check our Filter Conditions:

You have made your own Matillion ETL component!

Featured Resources

What Are Feature Flags?

Feature flags are a software development tool that has the capability to control the visibility of any particular feature. ...

BlogHow Your Data Teams Can Do More With Marketing Analytics

Improve your marketing analytics with Matillion Data Productivity Cloud that enables businesses to centralize and integrate ...

BlogThe Importance of Data Classification in Cloud Security

Data classification enables the targeted protection and management of sensitive information. Personally Identifiable ...

Share: