- Blog

- 06.30.2017

- Data Fundamentals, Dev Dialogues

Using the Matillion Salesforce connector for BigQuery

Matillion uses the Extract-Load-Transform (ELT) approach to delivering quick results for a wide range of data processing purposes: everything from customer behaviour analytics, financial analysis, and even reducing the cost of synthesising DNA.

The Salesforce Query component presents an easy-to-use graphical interface, enabling you to connect to live Salesforce and Force.com. Many of our customers are using this service for example to do event tracking, case and task management. The component allows you to bring the Salesforce data into BigQuery for analysis and integration.

The connector is completely self-contained: no additional software installation is required. It’s within the scope of an ordinary Matillion license, so there is no additional cost for using the features.

Matillion uses the Extract-Load-Transform (ELT) approach to delivering quick results for a wide range of data processing purposes: everything from customer behaviour analytics, financial analysis, and even reducing the cost of synthesising DNA.

The Salesforce Query component presents an easy-to-use graphical interface, enabling you to connect to live Salesforce and Force.com. Many of our customers are using this service for example to do event tracking, case and task management. The component allows you to bring the Salesforce data into BigQuery for analysis and integration.

The connector is completely self-contained: no additional software installation is required. It’s within the scope of an ordinary Matillion license, so there is no additional cost for using the features.

Video

Watch our tutorial video for a demonstration on how to set up and use the Salesforce Query Component in Matillion ETL for Google BigQuery. https://youtu.be/xekP1tG2QpAAuthentication and Authorization

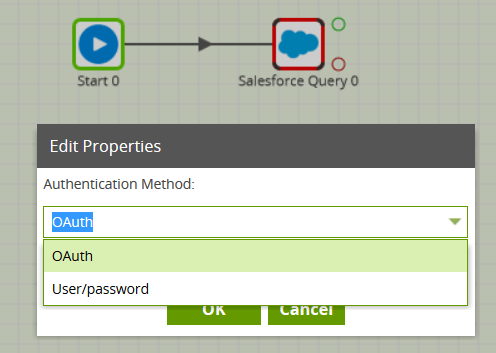

When you create an Orchestration job containing a Salesforce Query component, you’ll find that two authentication methods are available:- Username/Password/Security Token

- OAuth

User/password authentication

To use this option, you will need your Salesforce username and password, plus a Security Token, which can be generated or reset using the Salesforce site.

OAuth authentication

To use this option, you must first go to the Project / Manage OAuth menu, and follow the on-screen instructions. Then, choose the new Salesforce OAuth profile in the Salesforce Query’s Authentication property.

User/password authentication

To use this option, you will need your Salesforce username and password, plus a Security Token, which can be generated or reset using the Salesforce site.

OAuth authentication

To use this option, you must first go to the Project / Manage OAuth menu, and follow the on-screen instructions. Then, choose the new Salesforce OAuth profile in the Salesforce Query’s Authentication property.

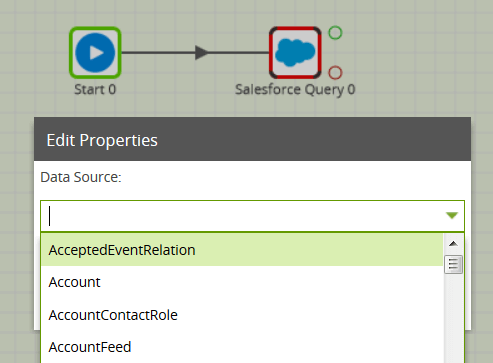

Data Source

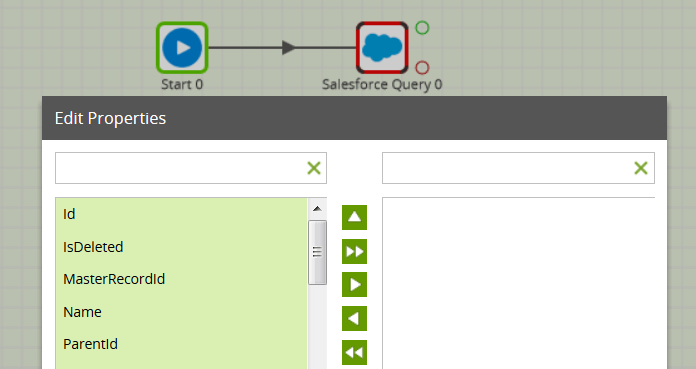

Once security has been configured, you will then be able to choose a Data Source from the dropdown list. The Salesforce Data Model contains nearly 200 tables and views to choose from, in addition to any custom objects if you have created them. Having chosen a Data Source, you can then go to the next property and choose one or more names from the Data Selection dialog. These will form the columns of your BigQuery table.

Having chosen a Data Source, you can then go to the next property and choose one or more names from the Data Selection dialog. These will form the columns of your BigQuery table.

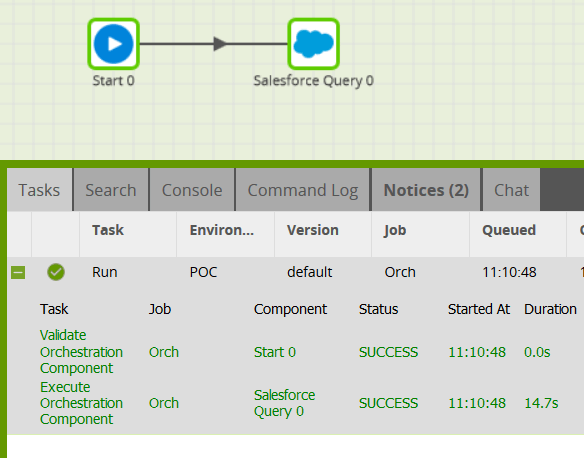

Running the Salesforce Query

The final mandatory properties for this component are the Target Table name and a Cloud Storage Staging Area. The latter is the URL and path of a Google Storage bucket which will be used temporarily to stage the queried data. Remember that this component also has a Limit property, defaulting to 100, which can be used to force an upper limit on the number of records returned. You can run the Orchestration job, either manually or using the Scheduler, to query your data from the Salesforce API, and bring it into Google BigQuery.

Exploring further

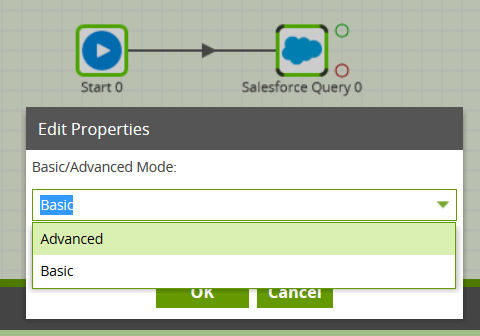

The Salesforce Query component offers an “Advanced” mode instead of the default “Basic” mode. In Advanced mode, you can write a SQL-like query over all the available fields in the data model. This is automatically translated into one or more Salesforce API calls on your behalf. Once you have finished bringing all the necessary data from Salesforce into Google BigQuery, you can then use it in a Transformation job.

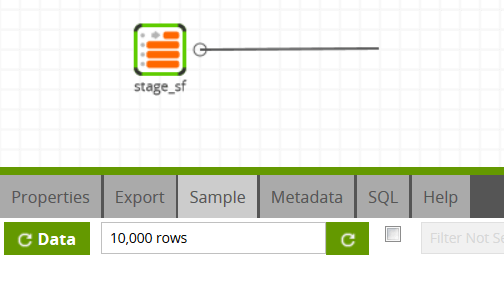

Once you have finished bringing all the necessary data from Salesforce into Google BigQuery, you can then use it in a Transformation job.

In this way, you can build out the rest of your downstream transformations and analysis, taking advantage of BigQuery’s power and scalability.

In this way, you can build out the rest of your downstream transformations and analysis, taking advantage of BigQuery’s power and scalability.

Useful Links

Salesforce Query Component in Matillion ETL for Google BigQuery Component Data Model OAuth Set Up Integration information VideoBegin your data journey

Want to try the Salesforce.com Query component in Matillion ETL for BigQuery? Request a free demo now, or launch on the Google Cloud Launcher.

Ian Funnell

Data Alchemist

Ian Funnell, Data Alchemist at Matillion, curates The Data Geek weekly newsletter and manages the Matillion Exchange.

Featured Resources

Blog

Data Productivity Cloud Now Offering Stronger Integrations For Databricks Users

Matillions Data Productivity Cloud on Databricks empowers data teams built around Databricks to be more productive and ...

BlogWhat Are Feature Flags?

Feature flags are a software development tool that has the capability to control the visibility of any particular feature. ...

BlogHow Your Data Teams Can Do More With Marketing Analytics

Improve your marketing analytics with Matillion Data Productivity Cloud that enables businesses to centralize and integrate ...

Share: