- 10.27.2021

What is Data Extraction? Everything You Need to Know

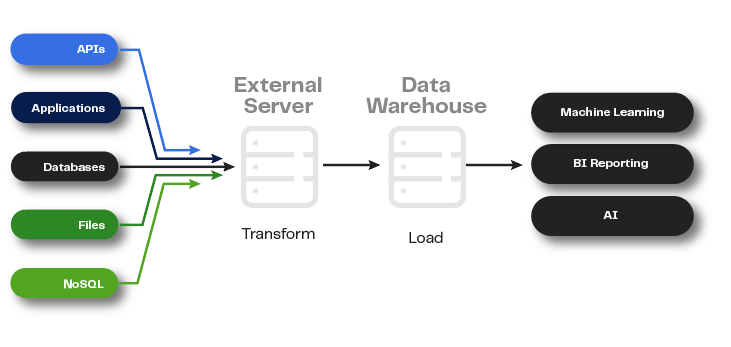

When performing data extraction, organizations retrieve data from various sources–including databases, legacy systems, online transactions, software as a service (SaaS) platforms, web pages, and more–for migration to a centralized repository. The process is the initial step in an extract, transform, and load (ETL) effort, which prepares enterprise data for analytics.

For example, a company seeking to gauge the impact of its brand and its reputation with customers may decide that it needs to analyze online data from social media platforms, sales transactions, and reviews. To mine that data for insight, businesses traditionally first extract it from these different sources, transform it all into a workable format, and load it into a data warehouse.

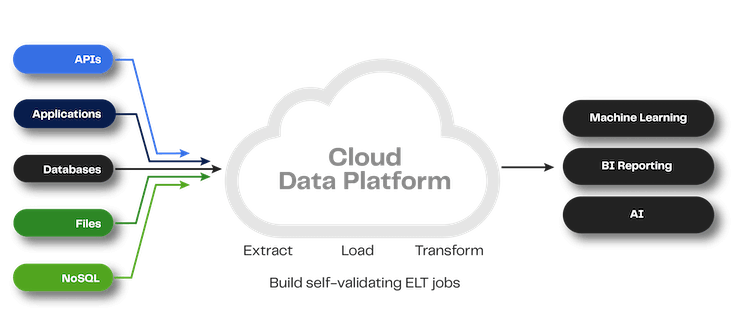

Data extraction is the first step in the ETL or ELT process, which prepares data for the analysis that provides business insight.

Alternatively, with a cloud-native product like Matillion, businesses extract the data from the sources, load it into the cloud data platform, and then transform it using the power of the cloud. This approach is known as extract, load, and transform (ELT). In either case, extraction is the first step toward making data useful for analytics, AI/ML, and more.

How is Data Extraction Done?

No matter what sources are involved, the data extraction process typically involves three core steps:

- Identify any changes in your data (for example, new tables or columns added to a database)

- Select parts of your data to be extracted (in the case of a full extraction, this will be the entire dataset)

- Perform the extraction
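As a rough illustration, the three steps above can be sketched against a SQLite database using Python's standard `sqlite3` module. The table name `orders`, the `known_columns` list, and the helper names are hypothetical and exist only for this example. The sketch also assumes that table and column names come from a trusted source, since they are interpolated into the SQL text.

```python
import sqlite3

def current_columns(conn, table):
    # PRAGMA table_info returns one row per column; index 1 holds the column name.
    return [row[1] for row in conn.execute(f'PRAGMA table_info("{table}")')]

def run_extraction(conn, table, known_columns, wanted_columns=None):
    # Step 1: identify changes (here, columns added since the last run).
    columns = current_columns(conn, table)
    new_columns = [c for c in columns if c not in known_columns]
    # Step 2: select what to extract (all columns means a full extraction).
    selected = wanted_columns or columns
    column_list = ", ".join(f'"{c}"' for c in selected)
    # Step 3: perform the extraction.
    rows = conn.execute(f'SELECT {column_list} FROM "{table}"').fetchall()
    return new_columns, rows

# Hypothetical source table that gained a column after the previous extraction.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)")
conn.execute("INSERT INTO orders VALUES (1, 9.5)")
conn.execute("ALTER TABLE orders ADD COLUMN currency TEXT")

new_columns, rows = run_extraction(conn, "orders", known_columns=["id", "total"])
print(new_columns, rows)
```

A real pipeline would typically persist `known_columns` between runs so that schema changes can be flagged before they reach downstream transformations.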

Data teams can schedule automated data extraction jobs or extract data on demand, as needed. Common data extraction methods include:

Setting and responding to notifications

Many source systems allow you to configure them to issue notifications every time a data record changes. Databases often have a mechanism for this, and SaaS platforms typically offer webhooks to provide similar functionality.
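To make the idea concrete, here is a minimal sketch of how a receiver might apply change notifications to a local copy of the data. The payload shape (`type`, `id`, and `record` fields) is an assumption made for this example; real databases and SaaS webhooks each define their own formats, and a production receiver would also sit behind an HTTP endpoint and verify the sender.

```python
import json

def apply_change_notification(raw_body: bytes, replica: dict) -> None:
    """Apply one change notification to an in-memory replica keyed by record id."""
    event = json.loads(raw_body)  # hypothetical payload: {"type", "id", "record"}
    record_id = event["id"]
    if event["type"] == "deleted":
        # Remove the record if we have it; ignore deletes for unknown ids.
        replica.pop(record_id, None)
    else:
        # Treat "created" and "updated" the same way: store the latest version.
        replica[record_id] = event["record"]

replica = {}
apply_change_notification(
    b'{"type": "created", "id": 1, "record": {"name": "Ada"}}', replica
)
apply_change_notification(
    b'{"type": "updated", "id": 1, "record": {"name": "Ada L."}}', replica
)
print(replica)
```

Because each notification carries the changed record, the receiver never has to query the source system to find out what changed.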

Performing incremental extraction

When data sources are not designed to deliver notifications but can indicate which records have changed since the last extraction, you can perform an incremental data extraction. Depending on the data source, you may create a change table, check timestamps, or use built-in change data capture (CDC) functionality to identify changes. By extracting only the records that have changed, you minimize your system load; however, some incremental techniques, such as timestamp checks, may not detect records that have been deleted from your source data.
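The timestamp approach can be sketched as follows, using SQLite from Python's standard library. The `customers` table, its `updated_at` column, and the function name are assumptions made for this example. The sketch stores timestamps as ISO 8601 strings, which compare correctly as plain text.

```python
import sqlite3

def extract_changed_rows(conn, last_extracted_at):
    """Return rows changed after the given watermark, plus the new watermark."""
    rows = conn.execute(
        "SELECT id, name, updated_at FROM customers "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_extracted_at,),
    ).fetchall()
    # Advance the watermark to the newest timestamp seen, if any rows changed.
    new_watermark = rows[-1][2] if rows else last_extracted_at
    return rows, new_watermark

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, updated_at TEXT)"
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [
        (1, "Ada", "2021-10-01T09:00:00"),
        (2, "Grace", "2021-10-20T14:30:00"),
        (3, "Edsger", "2021-10-26T08:15:00"),
    ],
)

# Only rows modified after the previous run are returned.
rows, watermark = extract_changed_rows(conn, "2021-10-15T00:00:00")

# A deleted row leaves no timestamp behind, so the next run sees nothing.
conn.execute("DELETE FROM customers WHERE id = 1")
rows_after_delete, _ = extract_changed_rows(conn, watermark)
```

The last two lines show the limitation in practice: the deletion of customer 1 produces an empty result, so the target system would keep a record that no longer exists in the source.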

Performing a full extraction

You will perform a full extraction the first time you replicate data from a source. You can also use this method when sources have no way to indicate that data has changed. The logic is simpler, but since full extraction involves larger volumes of data, the system load will also be greater.
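For comparison, a full extraction needs no change tracking at all. The sketch below copies every row of a table into CSV using only Python's standard library. The `customers` table and the function name are hypothetical, and the table name is assumed to come from a trusted source because it is interpolated into the query.

```python
import csv
import io
import sqlite3

def full_extract_to_csv(conn, table, out):
    """Write every row of the table to the file-like object as CSV."""
    cursor = conn.execute(f'SELECT * FROM "{table}"')
    writer = csv.writer(out)
    # cursor.description holds one tuple per column; item 0 is the column name.
    writer.writerow([column[0] for column in cursor.description])
    count = 0
    for row in cursor:
        writer.writerow(row)
        count += 1
    return count

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ada"), (2, "Grace")])

out = io.StringIO()
row_count = full_extract_to_csv(conn, "customers", out)
print(row_count, out.getvalue())
```

The logic is a single query, but every run reads the whole table, which is where the extra system load comes from.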

Why is Data Extraction Important?

Today, most organizations in most industries will need to extract data at some point. For many businesses, the need arises as part of a larger transition to a cloud platform for data storage and management. For others, data extraction becomes critical for upgrading databases, consolidating systems after an acquisition, or merging data from different business units.

Companies implement automated data extraction solutions so that they can:

Make informed decisions

To tap into business insight for faster, better decision making, enterprises start by rapidly extracting raw data from key sources.

Focus staff on high-value activities

Manual processes are demanding and costly in terms of staff time. With automated data extraction processes, businesses minimize the administrative burden on IT staff, allowing them to devote more time to higher-value tasks.

Minimize error

Incomplete, inaccurate, and duplicate information is inevitable when employees enter data into systems manually. By deploying automated data extraction solutions, companies reduce errors in their business-critical data.

Boost productivity

Manual data entry isn’t just time-consuming and prone to error; it’s a repetitive task many employees don’t relish. Many organizations find that freeing workers to focus on their main duties and more strategic projects boosts both individual and overall productivity, which is better for business.

What are the Types of Data Extraction?

In the broadest terms, organizations extract two types of data:

Unstructured data

Unstructured data isn’t stored in a standardized (or structured) database format. Both human- and machine-generated unstructured data is abundant. Common examples include audio, email, geospatial, sensor, and surveillance data, often coming from the Internet of Things (IoT). To extract unstructured data, companies first need to perform data preparation and cleaning tasks like removing duplicate results, deleting extra symbols, and determining how to handle missing values.
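The cleaning tasks mentioned above might look like the following minimal sketch for free-text readings. The sample data, the set of characters kept, and the choice to fill missing values with a placeholder are all assumptions made for this example; the right rules depend on the data and its intended use.

```python
import re

def clean_records(raw_lines, missing="UNKNOWN"):
    """Strip extra symbols, fill missing values, and drop duplicates."""
    seen = set()
    cleaned = []
    for line in raw_lines:
        text = "" if line is None else line
        # Delete extra symbols: keep word characters, whitespace, and . , @ -
        text = re.sub(r"[^\w\s.,@-]", "", text)
        # Collapse runs of whitespace left behind by the removal.
        text = re.sub(r"\s+", " ", text).strip()
        # Handle missing values by substituting a placeholder.
        if not text:
            text = missing
        # Remove duplicates, ignoring differences in letter case.
        key = text.lower()
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(text)
    return cleaned

raw = ["Sensor A: 21.5C!!", "sensor a 21.5c", None, "##Sensor B: 19.0C##"]
cleaned = clean_records(raw)
print(cleaned)
```

Here the second reading is dropped as a duplicate of the first once symbols and case are normalized, and the missing reading is kept as a placeholder so that gaps stay visible downstream.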

Structured data

Structured data is in a standardized format and is managed within a transactional system. Rows in a SQL database table are an example of structured data. When working with structured data, organizations typically perform the extraction process within a source system.

Companies can extract a vast range of different data–both structured and unstructured–to meet their business needs. But typically, the types of data extracted fall within three categories:

Operational Data

Many organizations extract data related to routine tasks and processes to better understand outcomes and improve operational efficiency.

Customer Data

Businesses often extract customer names, contact information, purchase histories, and more for marketing and advertising purposes.

Financial Data

Extracting metrics like sales numbers, purchasing costs, and competitor prices can help companies track performance and conduct strategic planning.

Types of Data Extraction Tools

Tools for data extraction include:

Batch processing

Batch processing tools extract data in large consolidated jobs, or batches. Demanding a large amount of compute power, these tools often perform data extraction during a company’s off hours.
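As a small-scale illustration of the batching idea, the sketch below reads a query result in fixed-size chunks with `fetchmany` from Python's standard `sqlite3` module, so that only one batch is held in memory at a time. The `events` table, the function name, and the batch size are hypothetical; dedicated batch tools add scheduling, retries, and parallelism on top of this pattern.

```python
import sqlite3

def extract_in_batches(conn, query, batch_size=1000):
    """Yield lists of rows, each containing at most batch_size rows."""
    cursor = conn.execute(query)
    while True:
        batch = cursor.fetchmany(batch_size)
        if not batch:
            break  # the result set is exhausted
        yield batch

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT)")
conn.executemany(
    "INSERT INTO events (payload) VALUES (?)",
    [(f"event-{i}",) for i in range(5)],
)

batch_sizes = [
    len(batch)
    for batch in extract_in_batches(
        conn, "SELECT id, payload FROM events", batch_size=2
    )
]
print(batch_sizes)
```

Each yielded batch could be written to the target system before the next one is fetched, which keeps memory use flat regardless of table size.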

Open source

Typically available free of charge, open source tools can be a good choice for an organization with a limited budget and the right IT knowledge to use the tools effectively.

Cloud

The latest generation of tools, cloud offerings excel at rapid, automated data extraction. Typically deployed as part of a larger cloud ETL solution, these platforms allow enterprises to take advantage of scalable storage and analytics while offloading the work of handling security and compliance in-house.

Want to Learn More About Data Extraction?

There’s big potential in big data. But your business can only unlock its value if you have the right technology, starting with tools for extracting data from your sources quickly and efficiently.

At Matillion, we offer data integration tools that are purpose-built for extraction, transformation, and loading processes in the cloud.

Find out more about our pre-built data source connectors for ingesting data, our Universal Connector that enables you to bring virtually any data into the cloud, Matillion ETL for end-to-end data integration and transformation, and Matillion Data Loader, our data ingestion tool for effortlessly loading system data into cloud environments. Or learn more about how to unlock the power of your data by requesting a demo of Matillion ETL.
